
-

Why 'Retro' Photography Is Back (and How to Get Started With It)

They say the best camera is the one you have with you. But if everyone has at least a pretty good camera in their phone, why would Gen Z (and really, everyone) be drawn to retro photography? Despite the downsides of bulky, standalone film cameras, the aesthetics and tangibility of old school photography still have a lot to offer. When we talk about “retro” photography, there’s a lot we could mean, but there’s a distinct revival trend around 80s- and 90s-style camera gear and aesthetics we want to focus on. Think Polaroid cameras and standalone point-and-shoots. And if you’re not already drawn in by the appeal of tangible photos and nostalgic vibes, allow me to make the case for why you should.

What is the appeal of retro photography?

There’s a tendency to think of camera technology as steadily progressing forward in a linear fashion. But for creative purposes, it’s often more helpful to think of it in terms of aesthetic eras. Every type of camera has distinct physical qualities that contribute to the appearance of the images it creates. And those qualities, over time, become associated with the times and images they capture.

For one very obvious example, consider film grain. As this video essay from YouTube channel Nerdwriter explores, film grain was initially just an artifact of how film cameras work. As it became possible to eliminate film grain, however, our brains started associating this grain with older cinema, or a more generic “film look.” With more control over how (and whether) film grain appears in an image, it can be used to deliberately create a chaotic energy in otherwise still footage.

This same principle applies to the aesthetics of every era of camera technology. 90s point-and-shoots, for example, were characterized by harsh, unflattering lighting. They typically had poor low-light performance, so a blinding flash was sometimes the only viable source of light.
Now, with better cameras and lighting equipment, that look can become a deliberate stylistic choice.

Film and instant cameras also provide a tangible experience that forces more deliberate choices. You might notice a lot of your old family photos have a kind of awkward, staged vibe, and there’s a reason for that. When you only had a dozen or so chances to take a picture, you had to be more careful to make sure everyone was posed and in frame, eyes were open, etc. Now, it’s easy to take dozens of photos until you get the right one, but going back to limited-use cameras can force you back into thinking ahead about the image you want.

And when it comes to instant cameras, there’s nothing quite like the experience of having a physical memento immediately. Everyone has piles of photos in their camera roll that they’ll never look at again, but if someone hands you a Polaroid of you and your loved ones, there’s a solid chance it’s going on your fridge or in your scrapbook.

If nothing else, there’s also something to be said for photography without all the AI nonsense that’s so unavoidable now. We have guides on how to take photos on Android or iOS without all the post-processing junk. But that can only go so far. On some level, every modern smartphone is doing some kind of digital processing to create a look that’s appealing to the vast majority of users. That can result in a smoothed-over, generic look that might not actually be what you want.

These are the best retro photography options

So, okay, you’re sold on the idea of experimenting with nostalgic aesthetics. Where can you get started? The great news here is that you have well over a century of camera history to play around with. Used camera gear is going to be your friend, and you can often find great tools for relatively cheap, either online or at local photography shops. In general, there are a few interesting categories of dedicated cameras to check out:

- Polaroid-style instant cameras. Through a convoluted process of bankruptcy, acquisitions, and relaunches, Polaroid is back, but it’s not the only game in town anymore. Fujifilm, Kodak, and Lomography all offer their own brands of instant cameras that can snap photos and immediately print them out.
- Classic digital point-and-shoots. Today’s point-and-shoots are geared a bit more towards professional photographers who want a high degree of control. But you can find a lot of cheap, used digital cameras from the last couple of decades that still take surprisingly good photos. In many cases, the digital noise or lens artifacts that would’ve been considered flaws when these cameras were new can offer creative opportunities to get a specific nostalgic look.
- Ancient smartphones. The earliest smartphones from the late 2000s had some pretty atrocious cameras by modern standards. But they also lacked a lot of the AI and post-processing that’s come to dominate the landscape today. You can find cheap, used smartphones on sites like eBay for as little as $50, which can be a handy way to get some authentically aughts-era photos without having to fake it.

Some camera gear, particularly DSLR and mirrorless camera lenses and systems, can retain its value for long periods of time. But there’s a wide range of used or outdated camera gear in circulation that provides distinct looks and feels. As you explore older cameras, pay attention to the unique aspects of the photos they create, and experiment with how you can use those traits to convey a different story.

How to get started with retro photography

Camera and smartphone manufacturers will never let you forget about their latest and greatest hardware, but where do you go to find the best gear from a decade or two ago?
The used market for photography equipment can be scattered and fractured, but here are some tips to get started with your hunt:

- Check out your local photography or thrift stores. Few things are more useful to a photographer than that one shop in town that always seems to have the used lens or proprietary power adapter you need for your camera. And if they trade in used camera gear, you can sometimes find unique devices you wouldn’t find anywhere else. If you establish an ongoing relationship with your local camera shop, you may even get the chance to try out gear you would otherwise have to buy just to experiment with.
- Search specialty camera gear sites. You can always find used cameras on generic auction sites like eBay, but for my money, I like checking out specialty sites like Adorama and Precision Camera. These sites offer a selection of used camera gear, and sometimes have a better selection than you’ll find on eBay. Every once in a while, I’ll sort the used camera section by lowest price and scroll to see what kind of budget options are on offer.
- Join a local photography group. Camera gear is expensive, but you don’t always need to invest a ton of money just to explore different aesthetics. In many cities, groups of photography enthusiasts get together for photo walks or just to meet up and trade tips. Making friends with other photographers is a great way to learn from others and even share each other’s equipment.

Even if you don’t want to invest in camera gear specifically, it can be a helpful exercise to look back through photos from past eras and observe what they have in common. Pull out your old family photo albums and compare them to the photos on your phone. Grab a movie from your childhood and examine how it looks different from the polished reboot that just dropped a couple of years ago. In photography, and in all art, the details matter.
A difference in color saturation, noise texture, or even how an image is framed can convey a world of meaning. As you explore the aesthetics of retro photography, your grasp of contemporary visual media can grow, making you better able to express yourself through visual art.

-

Oracle Launches AI-Powered Smart Assistant to Revolutionize Restaurant Support

Oracle has unveiled a significant technological advancement that promises to streamline operations for small restaurants and food services: the new Smart Assistant integrated into the Oracle Simphony Cloud Point of Sale (POS) system. This generative AI tool aims to empower restaurant teams to handle technical and operational challenges with unprecedented efficiency. For small business owners navigating the complexities of restaurant management, this innovation could be a game changer.

With the Smart Assistant, restaurant staff can ask straightforward questions like, “Why isn’t the workstation printer working?” or “Why can’t I log in to Simphony?” The AI delivers immediate, actionable answers tailored to each brand’s specific guidelines, significantly enhancing the operational workflow.

“The Oracle Simphony Cloud Smart Assistant is a game-changer for restaurant operators,” said Etienne Piat, vice president of service excellence and innovations at Oracle Consumer Industries. The AI tool not only reduces the workload for IT teams but also empowers staff to resolve common issues on the spot, keeping the focus on exceptional guest service.

One of the most appealing aspects for small business owners is the Smart Assistant’s ability to minimize dependency on external support. By allowing in-house staff to troubleshoot technical issues, restaurants can save both time and money. Because the Smart Assistant is contextual, staff can get personalized support simply by clicking an on-screen error message. This feature turns potentially stressful situations into manageable tasks, ultimately enhancing customer satisfaction.

Early users of the Smart Assistant are already reporting improvements in their operations. The capability to integrate brand-specific standard operating procedures ensures that guidance reflects unique business practices.
This offers a consistent approach across locations, allowing small restaurant chains or franchises to maintain compliance without added complexity. This cohesive support can significantly improve both efficiency and service quality, essential factors for businesses aiming to stand out in a competitive market.

Real-world applications of the Smart Assistant are vast. With immediate access to insights, staff can troubleshoot common POS issues such as login failures or device connectivity problems, reducing downtime. Because its generative AI is trained on extensive Simphony documentation, the assistant can deliver relevant answers in real time. This minimizes the need for external support calls and boosts first-time resolution rates, two critical metrics for any business looking to optimize operations.

However, small business owners should also consider potential challenges. Implementing a new technology often requires training staff to use new tools effectively, which can initially divert attention from daily operations. Employees will need time to adapt to the system and provide feedback to refine the accuracy of AI responses. Moreover, while self-service technology can reduce support costs, it’s vital that businesses maintain a balance between automation and human assistance. As this technology becomes more widespread, small restaurants will need to weigh the benefits against the potential disruption that comes with integrating a new system.

Nonetheless, the Oracle Simphony Smart Assistant’s advantages appear significant, particularly for businesses striving to maintain peak performance while prioritizing customer experience. The Smart Assistant will be broadly available to Simphony Cloud customers worldwide within the next 12 months, supporting over 100 languages.
As small businesses consider their operational strategies moving forward, it’s clear that advancements like these could redefine how restaurants handle in-house technical support and operational efficiency. For more details on how the Smart Assistant could benefit your restaurant, visit Oracle’s dedicated webpage.

This article, "Oracle Launches AI-Powered Smart Assistant to Revolutionize Restaurant Support," was first published on Small Business Trends.

-

How to Play Retro Games on Your Modern Phone or TV

One of my favorite ways to spend my free time is watching old movies. I love catching up on classics on my big OLED screen, and delving into the history of a medium I love. Unfortunately, it’s harder to do that with video games. While pulling up an old movie is usually as easy as finding it on streaming or renting it digitally, old video games are split across a number of different consoles, and you can’t always count on rereleases to make them accessible on modern systems. Luckily, there are still options for those who go looking for them. You can hunt down a vintage system and hook it up to your modern screen using an adapter, yes, but you can also use the power of modern devices to “emulate” these games in virtual environments, often with improvements, and if you do it right, it's all perfectly legal.

What is video game emulation?

Emulation is a massive rabbit hole, and can get about as deep as you want it to be. I’ve been using it for decades, and I’m still learning new things. But there are some basics you should know that will help you get started, including how it works, its legal status, the drawbacks of not playing on real hardware, and the benefits it offers beyond simple convenience.

Credit: Michelle Ehrhardt

Essentially, emulation uses the power of modern machines to brute force virtual environments that are close enough to real hardware that files designed for it think they’re running on the real deal, and boot up as if they were. Usually, this means emulators won’t come out until one or two generations after a console's official release, but there are now emulators for everything from the Nintendo Entertainment System to the Nintendo Switch (which runs on older hardware than you might think). Granted, you might expect that Nintendo isn’t too happy about that, but the kicker is that there’s not a lot the company can do about it (aside from trying its best to scare emulator developers).
A court case from way back in the day ruled that, so long as emulators don’t distribute copyrighted software, their developers are allowed to write their own code that mimics official hardware all they want. That means you’ll need to provide your own games for your emulators, and in some cases BIOS (or operating system) files.

To stay on the right side of the law, most emulator guides won’t tell you how to go about that, but there’s at least one method that’s totally fair game. It turns out that U.S. law allows you to make your own backup copies of games you own, so long as you don’t distribute them. With that, there are plenty of legal devices that will help you rip your game files from your own cartridges and discs, some of which I cover here. Some emulators are even so advanced that they’ll play your real discs if you simply put them in your PC’s disc drive.

Still, even if everything’s above board, there are a few drawbacks to emulating rather than playing on real hardware. The biggest issue you’ll notice is with accuracy, as some games might have graphics or audio bugs. Input lag is also a common complaint, as emulators often need extra time to register your button presses, since they have to read them and then pass them through to the emulated software. Finally, some games might not run on emulators at all, especially ones with unusual requirements. The original Xbox, for instance, is notoriously difficult to emulate.

On the flip side, though, there are benefits to emulating that real hardware can’t replicate, and they mostly come from the extra power of your modern device. Emulated games can often run more smoothly than on real hardware, hitting higher frame rates. You’re also usually able to render your games at higher resolutions than originally intended, basically playing them in HD. And most importantly for difficult games or flexible play sessions, you can use save states, which allow you to quickly save your current place in a game to a file, and reload it on demand.
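To make the idea of a save state concrete, here is a minimal sketch in Python. It assumes a toy machine whose entire state is a program counter, one register, and a small block of RAM; `ToyEmulator`, `save_state`, and `load_state` are hypothetical names for illustration, not any real emulator’s API. Real emulators also have to capture video, audio, and controller state, which is part of why save states can be fragile.

```python
import copy
from dataclasses import dataclass, field


@dataclass
class ToyMachineState:
    # Hypothetical stand-in for everything a real emulator tracks (CPU, RAM, etc.).
    program_counter: int = 0
    registers: dict = field(default_factory=lambda: {"a": 0})
    ram: bytearray = field(default_factory=lambda: bytearray(16))


class ToyEmulator:
    def __init__(self) -> None:
        self.state = ToyMachineState()
        self._slots: dict[int, ToyMachineState] = {}

    def step(self) -> None:
        # Pretend to execute one instruction: advance the PC and write to RAM.
        s = self.state
        s.program_counter += 1
        s.registers["a"] = (s.registers["a"] + 1) % 256
        s.ram[s.program_counter % len(s.ram)] = s.registers["a"]

    def save_state(self, slot: int) -> None:
        # Snapshot the whole machine, independent of the game's own save system.
        self._slots[slot] = copy.deepcopy(self.state)

    def load_state(self, slot: int) -> None:
        # Copy the snapshot back in, so the slot survives repeated loads.
        self.state = copy.deepcopy(self._slots[slot])


if __name__ == "__main__":
    emu = ToyEmulator()
    for _ in range(10):
        emu.step()
    emu.save_state(1)   # e.g. right before a boss fight
    for _ in range(5):
        emu.step()      # ...the fight goes badly
    emu.load_state(1)   # rewind to the snapshot
    print(emu.state.program_counter)  # 10
```

The deep copies matter: if the slot simply pointed at the live state, every step after saving would silently change the "snapshot" too.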
Save states let you save your game whenever you like, outside of whatever save system is built into it. That’s perfect if you only have a few minutes to play, or if you’re about to fight a difficult boss in a punishing retro game and don’t want to replay the whole level if you mess up (no judgment here). Because save states essentially take your emulator back in time, they can introduce instability, so it’s advised to use them in addition to more traditional saves, rather than as a full-on replacement for them. Emulators for more modern, difficult-to-run HD systems also may not support save states.

Still, those are enough improvements that I often prefer playing retro games through emulation, even if I have real hardware available to me. And while some of those enhancements are available through official emulation (Nintendo Switch Online has save states, for instance), not all of them are. I haven’t even gotten into widescreen hacks, which let you play old 3D games in a more modern aspect ratio without stretching the video, or HD texture packs yet. Benefits like these are why, if you’re willing to put in a little elbow grease, unofficial emulators are well worth trying out.

What you need to start emulating

The fans who develop emulators are crafty, and they’ve had plenty of time to refine their work, so most modern devices are able to emulate retro games to some degree. It’s become a running joke that Doom will play on just about anything, including a pregnancy test. But from a realistic point of view, there are a few things you’ll probably want on hand before you get started.

If you’re playing on a laptop or a desktop computer hooked up to a monitor, you’ll probably want a controller. Most emulators will support mouse and keyboard controls if you truly can’t get one, but using a controller will help a lot with the old school console experience. Aside from that, though, you might be all set.
I have a desktop PC that’s pushing 10 years old at this point, and it’s still able to emulate games through the PS2 and GameCube era at full speed, while upscaling them. Beyond that is when emulation tends to get a bit more demanding, but for retro games, you probably won’t need to upgrade your machine.

If you want to play on a TV, though, you could have a bit more of a shopping list in store for you. In addition to a controller, you’ll also need some type of computer to emulate your games with, and while you can drag a laptop or desktop PC into your living room, it’s often not the most convenient solution. Instead, I suggest getting either a docked Steam Deck or a Raspberry Pi.

The former’s a bit pricier, and has had stock issues during the RAM crisis, but it’s also compact, plenty powerful when it comes to emulation, easy to output to a TV via a dock, and can play PC games natively, too. With the right Steam Deck emulation setup, you can essentially turn it into your own homebrew Nintendo Switch, but for all your consoles. The latter, meanwhile, is far cheaper (although its price has also been inflated by the RAM crisis) and smaller, but will take a bit of know-how to set up and can struggle when emulating systems released after the PS1. Your best bet if you choose to go this route is probably to buy a Raspberry Pi kit, as these come with a case, cables, storage, and often a fan to get you started. You can also sometimes find these cheaper than a Raspberry Pi board on its own.

But again, the world is your oyster when it comes to which devices you want to emulate with. It’s possible to emulate on a streaming stick or box, too. Generally, if a device has a motherboard and can display a video signal, people will find a way to game on it. To wit, you should look into emulating on mobile devices, too.
These days, it’s possible to emulate on both iPhone and Android, and there is a whole slew of handheld gaming consoles that essentially build controllers into phone hardware running Android to give you an experience similar to a DS or PSP. These can be a great way to play portably, whether using touch controls, a Bluetooth controller, or built-in controls. And if you get a USB-C dock, you can then connect these devices to the big screen to play on them when you get home. You can even get a cheap handheld that runs Linux, for a similar experience to a Raspberry Pi while on the go. Read on here for more details about portable emulation.

Which emulators to get, and how to set them up

Now, it’s time to actually install your emulators, of which you have many choices. I've compiled a list of the apps you’ll probably be using to emulate your games, depending on the platforms you're interested in, before going into how to get them:

- Retroarch: An app with multiple emulator “cores” in it that can run games from most systems up through the PS1 era, including the Super Nintendo and Sega Genesis.
- Duckstation: A standalone app for emulating PS1, with enhanced stability and graphics features compared to Retroarch.
- Mupen64Plus: A standalone app for emulating Nintendo 64, with enhanced stability and graphics features compared to Retroarch.
- Flycast: A standalone Sega Dreamcast emulator with support for upscaled graphics and widescreen hacks.
- MelonDS: A standalone Nintendo DS emulator with community-driven forks that can run on two separate displays for a more authentic experience.
- Azahar: A standalone Nintendo 3DS emulator with community-driven forks that can run on two separate displays for a more authentic experience. Supports custom graphics drivers on mobile.
- PPSSPP: A standalone PSP emulator with a highly themed user interface reminiscent of the original console.
- Dolphin: A standalone GameCube and Wii emulator with high stability, support for custom mobile graphics drivers and upscaled graphics, and the ability to use motion controls. Usually preferable to emulating PS2 or Xbox, if playing a multi-platform game.
- PCSX2: A standalone PS2 emulator with support for upscaled graphics. Best used for PS2 exclusives. Not available on mobile.
- NetherSX2: A standalone PS2 emulator for mobile. Many of the same features as Dolphin, but lower stability, and no motion control or custom driver support.
- Cemu: A standalone Wii U emulator with support for custom mobile graphics drivers and upscaled graphics. No save state support. Requires a high-end machine.
- RPCS3: A standalone PS3 emulator with support for upscaled graphics, custom mobile graphics drivers, and save states. Requires a high-end machine.
- Eden: A standalone Nintendo Switch emulator with support for upscaled graphics and custom graphics drivers on mobile. No save state support. Requires a high-end machine.

Phew, that’s a lot. On the plus side, most of these emulators are available for Windows, Mac, Linux, and Android, although iOS users have a bit less to pick from, as Apple’s restrictions on certain programming techniques make higher-end systems like the GameCube and beyond difficult to run on its phones. That said, iOS does have access to some potentially more convenient options for older systems, like Delta, which comes with cute touchscreen control overlays built in.

Now, you could install these apps one by one, point them at your game files (which you’ll usually be guided through as part of setup), and play your games by booting up the specific emulator you want and picking the game you want to play from a list. But not only is that slow and inconvenient, it’s not as pretty and is less like using an actual retro console. To solve that problem, we have installers and frontends.
Emulator installers

In this case, installers are programs that will help you set up all your emulators in one fell swoop, and may also sort your games into collections by system or genre and boot you into the appropriate emulator when you select a game.

For installers, you have a few options. My favorite is Emudeck, which, despite being named after the Steam Deck, will run you through a simple setup wizard that installs any emulator you could possibly want, whether you’re on SteamOS, Linux, or Windows. There’s also an Android version in the works, and you can get early access to it if you subscribe to the development team’s Patreon. Alternatively, there’s Retrodeck. This is a Linux-only tool, but some users prefer it to Emudeck thanks to more fluid hotkey settings and a less bug-prone (but potentially slower) update process. Nicedeck is another alternative that aims to hit a middle ground between Emudeck and Retrodeck, and conveniently is the only one of these options that also works on Mac.

As someone who just manually installed a bunch of Android emulators one by one, I would highly recommend using an installer to automate the process instead, especially because many Android emulators need to be sideloaded, something that is about to get harder starting next year. An installer will also usually help you configure settings like your desired aspect ratio and upscaling for each system you want to play, which will save you some tedious trips to each individual emulator’s settings menu.

But just because your emulators are installed doesn’t mean we’re done yet. Instead of having to bounce from emulator app to emulator app and scroll through what can often be ugly built-in menus, let’s put all your games in one convenient, easy-on-the-eyes place.

Emulator frontends

A frontend is an app that will sort your games by system, or by custom collections you set up, like genre.
You’ll choose a game from one of its many lists, and the frontend will tell the appropriate emulator app to boot up the game. Then, when you’re done gaming, your emulator will take you back to your frontend. It’s a much more intuitive and console-like experience, and people have created plenty of themes to make frontends look just as nice as official console menus. Many frontends even come with “scrapers” built in, so they can fetch and display box art next to your games.

The most common and robust choice here is ES-DE, or EmulationStation Desktop Edition. It’s what I use personally, and it comes packaged with installers like Emudeck and Retrodeck. It has the most configuration options available, but can be a bit slow to boot. Also, while it’s free on Windows, Mac, and Linux, a small one-time Patreon donation is required to get the app for Android.

ES-DE alternatives on desktop are rare, but options like LaunchBox may be preferable for some users. Other frontend apps are more common on Android, as ES-DE took some time to come to Android, and some users prefer a more playful interface on mobile. Popular free options include Daijisho and Beacon, although I’m particularly interested in Cocoon, which is modeled after the Nintendo 3DS menu and has built-in dual-screen support.

Another option, if all of this sounds like too much setup, is to use Batocera. This is a Linux install that essentially packages largely preconfigured emulators for a wide variety of systems alongside a customized version of EmulationStation. Basically, you install it on your compatible device and boot into it separately from your main operating system, so everything lives in its own confined home. While that means it’s a bit limited, it’s also mostly plug-and-play. It’s also possible to run Batocera off a USB stick or SD card, if you don’t want to install it onto your device’s internal storage.
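Under the hood, the frontend hand-off described above boils down to mapping each game to an emulator and launching it. The Python sketch below assumes a hypothetical layout of one folder per system (for example `/roms/snes/`) and hypothetical core paths; the one real detail it relies on is that RetroArch’s command line accepts `-L` followed by a libretro core, then the game file. It only builds the command; a frontend would then hand it to something like `subprocess.run` and wait for the emulator to exit before showing its menu again.

```python
from pathlib import PurePosixPath

# Hypothetical core locations; real paths depend on your OS and how RetroArch was installed.
CORES = {
    "snes": "/usr/lib/libretro/snes9x_libretro.so",
    "genesis": "/usr/lib/libretro/genesis_plus_gx_libretro.so",
}


def system_of(rom_path: str) -> str:
    # Assumes one folder per system, e.g. /roms/snes/Game.sfc -> "snes".
    return PurePosixPath(rom_path).parent.name.lower()


def group_by_system(rom_paths: list[str]) -> dict[str, list[str]]:
    """Sort a flat list of game files into per-system collections, like a frontend menu."""
    collections: dict[str, list[str]] = {}
    for path in rom_paths:
        collections.setdefault(system_of(path), []).append(PurePosixPath(path).name)
    return {system: sorted(names) for system, names in collections.items()}


def build_launch_command(rom_path: str) -> list[str]:
    """Return the RetroArch command a frontend might run for the selected game."""
    system = system_of(rom_path)
    if system not in CORES:
        raise ValueError(f"no emulator configured for system {system!r}")
    # RetroArch loads a libretro core with -L, followed by the content to run.
    return ["retroarch", "-L", CORES[system], rom_path]


if __name__ == "__main__":
    library = ["/roms/snes/Zelda.sfc", "/roms/genesis/Sonic.md", "/roms/snes/F-Zero.sfc"]
    print(group_by_system(library))
    print(build_launch_command("/roms/snes/Zelda.sfc"))
```

Real frontends such as ES-DE keep this mapping in their own configuration and also cover standalone emulators, each with its own command-line options, which this sketch does not attempt to model.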
How to choose and install emulators and frontends for different systems and devices could be a whole series of articles on its own, but the community is welcoming, and is doing its best to make emulation easy and available to as many people as possible. The above programs should be enough to get you started, but if you have additional questions, experts like Retro Game Corps and subreddits like r/emulation are always there to help you out.

How to make your games look old school (or HD)

When emulating a game, you have three options:

- You can go with the raw emulation output, which will usually mimic a console’s native resolution by default but might not look fully accurate depending on the screen you’re playing on.
- You can upscale the resolution for a more HD image, and can even apply fan-made texture packs to make individual games look even crisper.
- Or, you can turn on a CRT filter to try to get a more retro feel, as well as help pixel art or low-polygon models look a bit smoother.

Frankly, this is another area where it’s possible to go on for days. You can mix and match different options from these approaches to your heart’s content, and Retroarch alone has hundreds of filter and shader options built in (options do differ from emulator to emulator).

Improving the look of 3D emulated games

For 3D games, the idea is to try to get a more modern experience. Widescreen hacks are a good place to start. These extend the aspect ratio to 16:9, then apply tweaks to the emulation so that the screen renders more of the play environment instead of simply stretching the default 4:3 image. It doesn’t work for every game, and can break the design in others (Resident Evil has very purposeful camera angles), but it’s often worth trying, especially in games where situational awareness is helpful, like platformers.
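The difference between a widescreen hack and plain stretching comes down to some simple geometry. This Python sketch assumes the hack keeps the game’s vertical field of view fixed and lets the horizontal view grow with the aspect ratio (often called “Hor+” scaling). Actual widescreen hacks are game-specific patches, so this only illustrates the math, not how any particular emulator implements them.

```python
import math


def stretch_factor(old_aspect: float = 4 / 3, new_aspect: float = 16 / 9) -> float:
    """How much wider every pixel gets if a 4:3 image is simply stretched to 16:9."""
    return new_aspect / old_aspect


def widened_hfov(hfov_degrees: float, old_aspect: float = 4 / 3, new_aspect: float = 16 / 9) -> float:
    """Horizontal field of view after widening the screen with vertical FOV held fixed."""
    half = math.radians(hfov_degrees) / 2
    # tan(h/2) = aspect * tan(v/2), so with v fixed, tan(h/2) scales with the aspect ratio.
    return math.degrees(2 * math.atan(math.tan(half) * new_aspect / old_aspect))


if __name__ == "__main__":
    print(f"stretching: pixels become {stretch_factor():.3f}x wider")
    print(f"widescreen hack: 90 degrees at 4:3 becomes {widened_hfov(90):.1f} degrees at 16:9")
```

Stretching makes everything about a third wider without revealing anything new, while the Hor+ approach turns a hypothetical 90-degree view at 4:3 into roughly 106 degrees at 16:9, which is the extra play environment the hack renders at the sides.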
HD texture packs, meanwhile, help clear up low-resolution HUDs or 2D assets (which are still quite prevalent in 3D retro games) that won’t be covered by upscaling. These need to be developed on a per-game basis, so you’ll need to search for them, but a popular example is Henriko Magnifico’s 4K Zelda texture packs. Personally, I do think these can sometimes interfere with a developer’s intended art style too much, but some people swear by them.

Improving the look of 2D emulated games

For 2D games, I like to try to make my game look like it’s playing on an old-school TV, and that’s not just for flavor. Pixel art was designed with CRT televisions in mind, which would smooth and blur harsh edges together to make pixels look more hand-drawn. You lose that effect if you just use raw emulation output on a modern television, but you can mostly get it back with the right filters.

This is far from a solved issue, but so far, my favorite option is the zfast-crt.slangp shader in Retroarch (found in the Quick Menu under Shaders). This is a subtle effect that feels far more accurate to me than the CRT filters often included in official retro game collections. And because it’s included with Retroarch, it’ll work on any device that runs Retroarch and for any system Retroarch supports, which includes most retro consoles you would play 2D games on.

But CRTs provided an additional benefit beyond making pixel art look nice. Because of the way they scan in their images, they’re highly resistant to motion blur. If you have a device with a 120Hz screen, you can mimic this using a technique called black frame insertion, which replaces every other frame of your video output with a black frame, breaking up the image and helping your eyes reset. While this will slow down your gameplay on a standard 60Hz screen, a 120Hz screen lets you use black frame insertion while still getting 60 fps gameplay.
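The arithmetic behind that 120Hz requirement is easy to sketch. This Python example models basic black frame insertion as following each rendered frame with one black refresh; that is a simplification, and implementations such as Retroarch’s toggle or the Blur Busters shader may work differently. The function names are hypothetical.

```python
BLACK = "BLACK"  # placeholder for an all-black refresh


def insert_black_frames(frames: list[str]) -> list[str]:
    """Follow every rendered frame with one black refresh, as basic BFI does."""
    output: list[str] = []
    for frame in frames:
        output.extend([frame, BLACK])
    return output


def game_fps_with_bfi(display_hz: int) -> float:
    """Half the display's refreshes are black, so only half can carry game frames."""
    return display_hz / 2


def lit_time_ms(display_hz: int) -> float:
    """With BFI, each game image stays lit for a single refresh period."""
    return 1000 / display_hz


if __name__ == "__main__":
    print(insert_black_frames(["frame1", "frame2"]))
    for hz in (60, 120):
        print(f"{hz}Hz: {game_fps_with_bfi(hz):.0f} fps, each image lit {lit_time_ms(hz):.1f} ms")
```

On a 60Hz screen this halves a 60 fps game to 30 fps, while a 120Hz screen keeps 60 fps and lights each image for about 8.3 ms instead of the roughly 16.7 ms it would be held at 60 fps without BFI. That shorter on-screen time is what reduces perceived motion blur.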
Black frame insertion is built into Retroarch as a toggle on the default Settings > Video > Synchronization page, but to be honest, I find this implementation comes with some pretty intense flickering. Instead, I prefer the crt-beam-simulator.slangp shader developed by the folks over at Blur Busters, which has a more subtle effect that looks more like the old-school TVs I remember from back in the day. Getting this running in Retroarch takes a few extra steps, but luckily, Retro Game Corps has a great video walking through it, including how to tweak it to your liking and combine it with zfast-crt.slangp if you’d like.

With tools like these, it’s clear that the appetite for playing games from older consoles isn’t going anywhere anytime soon, even if it’s harder than pulling up an old movie on Netflix. Whether you’re on PC, Mac, a Steam Deck, or mobile, you’ve got plenty of options already, even as hardware costs rise. From where I'm sitting, the frontier for retro gaming looks bright.

-

Where paid media optimization should stop in long sales cycles

In long sales cycles, a lot of what happens after lead submission involves people. When you optimize campaigns to final sales, you’re teaching the ad platform to respond to how well the sales team performed that month rather than lead quality, and that’s a problem no amount of campaign changes will fix. The common advice is to “optimize the full funnel” (i.e., track media spend to revenue, optimize campaigns to sales, etc.). But beyond lead capture, most of what drives sales has little to do with your paid media. It’s about who’s on the sales team, how busy they are, and dozens of other factors you can’t influence through targeting or creative.
When your sales team becomes the signal
I’ve spent over 15 years in financial services marketing, but this isn’t unique to mortgages or insurance. If your sales process relies heavily on people, you’ll recognize this immediately. In most businesses, there’s someone like Dave. In my case, he’s a mortgage adviser, but in yours, he might be your top enterprise sales rep, your star business development manager, or your best project estimator. He closes deals at twice the rate of his colleagues, not because he gets better leads, but because he’s naturally gifted at building rapport, asking the right questions, and guiding anxious customers through difficult decisions. However, Dave isn’t always there. Sometimes he’s on vacation, sometimes he might leave the company for a better opportunity, or sometimes your business hires three more Daves. The makeup of your sales team likely changes constantly. You might have more experienced closers one month, fewer the next, a recruitment drive that brought in several new starters, or Dave and two of his colleagues leaving within a month of each other. Sales rates can swing dramatically based purely on who’s in the office, regardless of lead quality. This can lead to targeting problems.
For example, when the conversion rate drops because Dave’s away and a junior team member is covering his accounts, the algorithm sees it as a targeting problem rather than a staffing issue. If you’ve set your campaigns to optimize for sales, it thinks, “Our targeting stopped working. These clicks are lower-quality for this conversion action now. We should shift spend away from these audiences.” Over time, keywords that were previously working well can get turned off and audiences that were driving sales volume can stop being bid on. Eventually, the entire account’s performance declines. But the leads haven’t changed, only the team has.
Operational factors that distort your conversion data
It’s not just the sales team makeup, either. Say the team gets slammed in Q4 as everyone tries to close before year-end. Response times stretch from two days to over a week, and customers get impatient and look elsewhere. Perhaps market conditions shift, and your most competitive product gets pulled. Or summer vacations leave the team short-handed, and some leads go cold before anyone contacts them. Then September comes and everything bounces back to normal. It goes beyond the day-to-day, too. Budget approvals get delayed, product ranges change, and planning delays push projects back. The specific reason varies by business, but the effect on your conversion data is always the same: the algorithm ends up thinking targeting got worse when, in fact, the team was just busy with leads from other sources.
When Dave becomes a superhuman: The Santa Claus Rally
The Santa Claus Rally, also known as the December Effect, is the best example I’ve seen of how human behavior can throw off algorithmic targeting.
Every December in financial services, something strange happens. In the third week of December, conversion rates from lead to sale spike dramatically. We’ve seen increases of up to 150% compared to normal weeks. If campaigns are optimized for sales, the algorithm thinks, “Whatever we’re doing this week is working incredibly well!” Then the holiday week arrives, and everything crashes, with conversion rates plummeting to a fraction of normal levels. None of it has anything to do with paid media. In week three, Dave and his colleagues are in target-hitting panic mode. End-of-year bonuses are on the line, and there’s one final push before the holiday break. So they’re calling leads faster, following up more aggressively, and closing deals they might typically have let simmer. Dave is working like a machine. Then the holiday week arrives. Everyone’s mentally checked out, customers aren’t answering phones, and Dave has finally taken time off. The team that’s still at work is thinking more about family get-togethers and less about targets. The lead quality, targeting, and ads haven’t changed. The team is just working at different levels of intensity due to seasonality. Yet the algorithm overbids during the rally week and underbids during the holiday week for identical audiences, purely based on when Dave and his team take their vacations.
Where optimization should actually stop
So if optimizing for sales is being distorted by things outside your control, where should you draw the line? How can you correct for this distortion and still drive the right type of leads? The answer is your last point of control, which, for these kinds of sales, is lead submission. But don’t just count leads. Instead, value them based on both their likelihood to convert and the commercial value of the eventual sale.
The other issue is that most high-value businesses only generate a handful of sales per month, which isn’t enough data for automated bidding to learn anything useful. Lead valuation also solves this by giving the platform hundreds of conversion events rather than a few sales. Automated bidding can then function properly, campaign and audience testing becomes meaningful, and the data stays reliable. You’re optimizing to lead quality before Dave and the sales team get involved. To be clear, importing downstream conversion stages or revenue into ad platforms can be extremely powerful. But optimizing to those signals only works when volume is sufficient, conversion lag is manageable, and the sales process is stable.
How to build lead valuation
The starting point is your historical data, ideally 12 months of it, though you can work with six. You need to understand which leads actually closed, what they were worth, and what they had in common at the point of inquiry. For financial services, that’s things like loan amount and term. For B2B, it might be company size or sector. For construction, it’s usually project size and urgency. From there, group leads by their likelihood of closing and by typical deal size, then assign each group an expected revenue value. The check to make sure it’s working as expected is simple: the total estimated value you assign to your leads over a period should roughly match the revenue they actually generated. If it doesn’t, the model needs work. Ideally, revisit it at least quarterly as your campaigns and operational factors change. As an example, you might end up with a high-likelihood lead worth $850, a mid-range lead at $420, and a lower-likelihood lead at $120.
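Here is a minimal Python sketch of the grouping-and-check process described above. It assumes leads have already been bucketed into likelihood tiers at the point of inquiry. All lead records, deal values, and the 20% calibration tolerance are made-up illustrations, not figures from the article. A real model would use your own 6 to 12 months of CRM data.

```python
from collections import defaultdict

# Hypothetical historical leads: (tier, closed, deal_value).
# In practice the tier would come from inquiry-time attributes
# (loan amount and term, company size, project size and urgency, etc.).
HISTORY = [
    ("high", True, 2000), ("high", True, 1000), ("high", False, 0), ("high", False, 0),
    ("mid", True, 1200), ("mid", False, 0), ("mid", False, 0), ("mid", False, 0),
    ("low", True, 500), ("low", False, 0), ("low", False, 0), ("low", False, 0), ("low", False, 0),
]

def build_lead_values(leads):
    """Expected revenue per lead in each tier = close rate x average deal value."""
    counts, wins, revenue = defaultdict(int), defaultdict(int), defaultdict(float)
    for tier, closed, deal_value in leads:
        counts[tier] += 1
        if closed:
            wins[tier] += 1
            revenue[tier] += deal_value
    values = {}
    for tier in counts:
        close_rate = wins[tier] / counts[tier]
        avg_deal = revenue[tier] / wins[tier] if wins[tier] else 0.0
        values[tier] = round(close_rate * avg_deal, 2)
    return values

def calibration_ratio(values, leads):
    """Total value assigned to a period's leads divided by the revenue they produced."""
    estimated = sum(values[tier] for tier, _, _ in leads)
    actual = sum(deal_value for _, closed, deal_value in leads if closed)
    return estimated / actual

values = build_lead_values(HISTORY)
print(values)  # {'high': 750.0, 'mid': 300.0, 'low': 100.0}

# A later (also hypothetical) period, used to check that the model still holds up.
NEXT_PERIOD = [
    ("high", True, 1800), ("high", False, 0),
    ("mid", True, 900), ("mid", False, 0),
    ("low", False, 0), ("low", False, 0),
]
ratio = calibration_ratio(values, NEXT_PERIOD)
within_tolerance = abs(ratio - 1) <= 0.20  # 20% tolerance is an assumed threshold
print(round(ratio, 2), within_tolerance)  # 0.85 True
```

The ratio compares the value you would have fed the platform against the revenue those leads produced. A ratio drifting well away from 1 signals that the model needs work.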
Once you have that, set up your conversion tracking to pass the expected value back to the platform on your conversion action. Then use value-based bidding (target return on ad spend in Google Ads) to point the algorithm toward the leads that are actually worth chasing.
Optimize for what you can control
“Optimize the full funnel” sounds sensible until you realize how much of that funnel you don’t actually control. You can influence the targeting, the creative, the landing page, and the experience that gets someone to submit a form. After that, it’s over to Dave and the sales team, and dozens of other factors that have nothing to do with your campaigns. When you expect an algorithm to optimize for things it can’t see, it starts drawing the wrong conclusions, chasing the wrong audiences, and getting worse over time. The answer isn’t to stop measuring what happens after lead submission. You absolutely should keep measuring, as those numbers can tell you a lot about what’s going well and what might need correcting. Remember: When lead quality stays steady but sales drop, that’s an operations issue, not a paid media one. When both drop at the same time, look at your campaigns. When sales spike but lead quality is flat, that’s Dave having a great month, not your targeting. That visibility is genuinely helpful; it just shouldn’t be what you’re optimizing to. Build lead valuation, feed expected values back to your platform, and let the algorithm do what it’s actually good at: finding people who look like your best leads. Leave the rest to Dave. Know where your control ends, as that’s where optimization should stop. View the full article

-

my boss asked me to mentor my coworker, but it’s really my boss who needs mentoring

A reader writes: About three years ago, we had a new manager start at my job, Fergus. Fergus is a very nice guy, but has never been a manager before. He delegates some of his core tasks to us, and seems to struggle with things like project management, clear and proactive communication, and HR-type stuff. It doesn’t happen all the time, but when he has a tricky situation, he will come to me and ask my opinion on how to handle it, and I coach him on what to say and what actions should come next. (Before I started here eight years ago, I’d been a department head at my previous company. That place was toxic as hell, and I happily took a step down out of management to get out of there.) Two weeks ago, Fergus asked me to be a mentor to one of my colleagues, Chip. Please note that there is no real hierarchy in our department; other than Fergus, we are all peers on the org chart. Chip is older than me, a gem, and also a bit quirky. Most of Fergus’s “what should I do?” questions in the past were in relation to Chip. We do very different jobs within the department, but I agreed to the mentorship as long as it was what Chip wanted. Chip just wants the drama to go away so he can focus on his work. All agreed to the mentorship. For the last week, I’ve been talking with three people who Fergus told me had lodged serious complaints against Chip, so I could get an idea of what goals to work towards. The first person gave me a lot of valuable feedback about how, yes, there were some instances that Chip could have handled better, but a lot of the issues could be solved by having more consistent department procedures and communications tools. The second person had a lackluster interaction with Chip two years ago. They worked through the issues that led to the misunderstanding, and she showed me an email thread that showed the new procedures were working fine and she was satisfied. The third person had no idea why I was asking her questions. 
She had no issues with Chip or anyone in our department. She had never spoken to Fergus. As far as we can tell, a few weeks ago she was raging about a bad experience with an external vendor, and one of her office mates is Fergus’s spouse. (Many yikes happening here, and I had to reassure her that she had done nothing wrong and no one was in trouble.) At this point, I think it’s clear that while Chip could use a bit of mentorship on “reading the room” and working with sensitive customers, most of the work really needs to happen with Fergus. We need better department procedures, and Fergus needs to work on his own leadership skills. He’s a nice guy and I think as much as he wants to do well here, he seems to have some sort of anxiety around HR-type things. These instances that are looming large in his mind are old news or nonexistent issues based on rumors and assumptions. I agreed to the mentorship because, while I do believe that Fergus should be the one doing this in theory, I want Chip to stay and be successful and I don’t think that will happen if Fergus tries to mentor him. So … how do I “manage up” with Fergus? I just got done teaching my whole department about Change and Project Management because too many situations had happened because we lacked those processes. Now I’m doing HR-type stuff. I’ve drawn the line that I’ll only interfere in management if it is negatively impacting me. I am looking for a script for how to talk to Fergus about his own leadership journey while also not becoming his mentor. I can’t go to my grandboss, because Fergus and his spouse are very well-connected and I don’t want to spend my political capital there. I just want to be left alone to do my work.
Nooo, don’t get involved in this at all. You’re not Chip’s manager, you’re not being paid to do this work, and the fact that Fergus would prefer not to do it and is bad at it doesn’t make it your job.
If your company wants it to be your job, they need to pay you for it and give you a level of authority that would make this sort of coaching and intervention appropriate. It’s not inherently inappropriate to be asked to coach or mentor a peer, but this is much more than that: it’s not appropriate for you to be digging into other people’s concerns about a peer, even though Fergus asked you to. That’s squarely Chip’s manager’s job. Unfortunately, Chip doesn’t have a manager who’s willing or able to do it, but that doesn’t mean you should step up and do it yourself. Tell Fergus you talked with the three people he suggested and learned that two of them didn’t have issues with Chip at all and the other raised issues that could be solved by more consistent department procedures and communications tools. Then say that in doing this, you realized that it didn’t feel appropriate for you to dig into a peer’s performance in that way and you’re not comfortable staying in that role for a peer, so you’re going to officially hand that responsibility back to Fergus. If you’d like to do this stuff, you could say, “If at some point there’s room to create an additional manager role on the team to work on issues like this, I’d definitely be interested in being considered for that. But otherwise I realize I’ve overstepped and prefer to stick to being Chip’s peer.” If Fergus tries to tell you that you’re not overstepping because he deputized you to act in his place, you can say, “I appreciate you putting that trust in me. I’m really not comfortable doing that without formally having a job that would give me standing to do that kind of management with a peer. But if it’s an option to formalize that kind of arrangement, I’d love to talk about that.” It is similarly not your job to talk to Fergus about his own leadership deficiencies.
You can certainly flag that your team needs better processes for X or that situation Y is a problem. If he expresses uncertainty about how to handle those things, there may be room to say at some point, “I know there are some great classes on management that HR has sent people to for things like this” (or something similar). But anything beyond that is getting into coaching Fergus on management, and that’s something that needs to come from above him. Not only is it inappropriate for you to try to do it from below, but if you did try, it’s likely to mean (a) tons of unpaid labor from you, (b) probably very little payoff (because if Fergus hasn’t figured out after three years that he needs to learn to manage, it’s highly unlikely that you’ll be able to cajole him into it from below), and (c) a huge exercise in frustration, because it will let you keep believing this stuff might change when in fact very little probably will. (I have been in exactly those shoes before, and it is a fruitless, frustrating path that will suck out all your energy and not pay you for it.) You said you just want to be left alone to do your work, and the good news is: you can be. But to do that, you need to decline Fergus’s attempts to delegate his management work to you, and you have to accept that the department is probably going to stay poorly run. The post my boss asked me to mentor my coworker, but it’s really my boss who needs mentoring appeared first on Ask a Manager. View the full article

-

As the U.S. cripples Cuba with a blockade, Trump gives a Russian oil tanker access

President Donald Trump on Sunday night said he has “no problem” with a Russian oil tanker off the coast of Cuba delivering relief to the island, which has been brought to its knees by a U.S. oil blockade. “We have a tanker out there. We don’t mind having somebody get a boatload because they need … they have to survive,” Trump told reporters as he flew back to Washington. When asked if a New York Times report that the tanker would be allowed to reach Cuba was true, Trump said: “I told them, if a country wants to send some oil into Cuba right now, I have no problem whether it’s Russia or not.” On Monday, Russia’s Transport Ministry said the oil tanker Anatoly Kolodkin arrived at the Cuban port of Matanzas carrying “humanitarian supplies” of about 730,000 barrels of oil. The vessel is sanctioned by the United States, the European Union and the United Kingdom following the war in Ukraine. Kremlin spokesman Dmitry Peskov said Monday that Russia had previously discussed its oil shipment to Cuba with the United States. “Russia considers it its duty not to stand aside, but to provide the necessary assistance to our Cuban friends,” he told reporters. Trump, whose government has come at its Caribbean adversary more aggressively than any U.S. government in recent history, has effectively cut Cuba off from key oil shipments in an effort to force regime change. The blockade has had devastating effects on the civilians Trump and Secretary of State Marco Rubio say they want to help, leaving many desperate. Islandwide blackouts have roiled Cubans already grappling with years of crisis, and a lack of gasoline and basic resources has crippled hospitals and slashed public transport. Experts say the anticipated shipment could produce about 180,000 barrels of diesel, enough to meet Cuba’s demand for nine or 10 days. Cuba has long been at the heart of a geopolitical tug-of-war between the U.S. and Russia.
Trump on Sunday dismissed the idea that allowing the boat to reach Cuba would help Russian President Vladimir Putin. “It doesn’t help him. He loses one boatload of oil, that’s all it is. If he wants to do that, and if other countries want to do it, it doesn’t bother me much,” Trump said. “It’s not going to have an impact. Cuba’s finished. They have a bad regime. They have very bad and corrupt leadership and whether or not they get a boat of oil, it’s not going to matter.” He added: “I’d prefer letting it in, whether it’s Russia or anybody else because the people need heat and cooling and all of the other things.” Associated Press reporters Megan Janetsky and Andrea Rodríguez contributed to this report. —Darlene Superville, Associated Press View the full article

-

UK government on verge of full nationalisation of British Steel

Talks with Chinese owner Jingye continue while losses mount. View the full article

- Today

-

AI citations explained: how they work and how to get them

AI search is changing how visibility works. Users are getting direct answers instead of clicking links, which means fewer chances to drive traffic. In this shift, AI citations are becoming the new gatekeepers, deciding which sources get featured in answers. Over the past year, search has moved from ranking pages to selecting sources, pushing us from traditional SEO toward AI-driven visibility. In this article, we’ll explain what AI citations are, how they work, and how you can earn them.
Table of contents
- What are AI citations?
- How AI citations impact brand credibility
- How AI citations work: a complete breakdown
- Strategies to get cited by AI models
- Tracking AI brand presence with Yoast
- FAQs on AI citations
- AI citations: The currency of the AI-driven web
Key takeaways
- AI citations are the references search engines include in AI-generated answers, and earning them boosts your credibility and visibility.
- Visibility is shifting from traditional SEO rankings to inclusion in AI-generated answers as a key factor in brand presence.
- AI tools retrieve information from diverse sources, with citations coming from both top-ranking and deeper pages.
- To earn AI citations, create valuable, structured content and establish topical authority across your niche.
- Tools like Yoast AI Brand Insights help track your AI visibility and citation presence across platforms.
What are AI citations?
Citations have always been a way to show where information comes from and why it can be trusted. The same idea now applies to AI-generated answers. AI citations are the references that search engines and AI tools include to support the answers they generate. When a tool like ChatGPT responds to a query, it often points to specific pages or sources that back up the information. These references act as signals of credibility, helping users understand where the answer is coming from and giving them a way to explore the original content.
In simple terms, if your content is cited, it becomes part of the answer itself, not just another link in the results.
AI citations vs the blue link era
If AI citations determine what gets included in answers, it’s worth asking how this differs from how search used to work. This isn’t just a feature update; it’s a shift in how visibility itself is earned. In the traditional model, ranking higher meant getting more clicks. In AI-driven search, being selected as a source matters just as much, if not more.
Aspect | Traditional SEO | AI citations
Visibility | Blue links | AI-generated answers
Traffic | Click-driven | Influence-driven
Authority signal | Backlinks | Credibility and accuracy
User action | Visit website | Consume instant answers
This doesn’t mean traditional SEO is going away. Rankings, indexing, and backlinks still play a critical role. However, how that value gets surfaced is changing. Instead of just competing for position on a results page, you’re now competing to be part of the answer itself. Do check out Alex Moss’s talk at BrightonSEO 2025 on the evolution of search intent and discoverability.
Where do AI citations come from?
Before you try to earn AI citations, it’s important to understand where they actually come from. You’re not just competing with other blog posts; you’re competing with an entire information ecosystem. AI models pull their answers from a mix of sources:
- Web content: Blog posts, guides, landing pages, and long-form articles
- Structured sources: Platforms like Wikipedia, documentation hubs, and product data feeds
- Forums and UGC: Discussions from Reddit, Quora, and Stack Overflow
- First-party data: Brand websites, help centers, and official resources
How the sources are selected is quite interesting. A recent analysis of Google’s AI Overviews found that citations don’t strictly come from top-ranking pages. In fact, only about 38% of cited sources rank in the top 10 results, meaning a large share comes from deeper pages or alternative formats.
Another key insight, from CXL: AI models tend to prioritize clear, early answers, with a significant portion of citations pulled from the top sections of a page rather than from deeper sections. The takeaway is simple. AI systems are not just ranking content; they are selecting the most useful pieces of information across formats and sources. That means your content is competing not only on rankings but also on clarity, structure, and trustworthiness across this entire ecosystem.
Types of AI citations
Not all AI citations look the same. Depending on the query and intent, AI models pull in different types of sources to support their answers. Broadly, you’ll see three main types:
Informational citations
These are the most common. AI tools refer to blog posts, guides, and educational content to explain concepts or answer questions. If someone asks, “What are AI citations?”, the sources cited will typically be long-form, explanatory content.
Product citations
These show up in commercial or comparison queries, for example, “best SEO tools” or “top project management software.” Here, AI models cite product pages, listicles, and review-based content to support recommendations.
Multimedia citations
AI doesn’t rely solely on text. Videos, images, and other visual formats can also be cited, especially when they explain something better than text alone. Think tutorials, walkthroughs, or demonstrations.
How AI citations impact brand credibility
AI citations don’t just drive visibility. They shape how your brand is perceived before a user even visits your website. When your content is cited in an AI-generated answer, some of that trust transfers to your brand. You’re no longer just another result on a page; you’re part of the answer itself. And that changes how users interpret your authority. This also means buyer decisions are starting earlier.
Users may form opinions, shortlist options, or even make decisions directly from AI responses, without ever clicking through. If your brand isn’t cited, you’re not part of that consideration set. There’s also a strong signal of relevance at play. Being included in AI answers suggests that your content is not just optimized but genuinely useful in context. It tells both users and algorithms that your brand deserves to be surfaced. Over time, this creates a compounding effect. The more your content is cited, the more your brand becomes associated with specific topics. That repeated exposure builds familiarity, authority, and trust.
How AI citations work: a complete breakdown
So far, we’ve talked about what AI citations are and where they come from. But how do AI systems actually decide what to cite? Let’s break it down. At a high level, most AI-powered search systems follow a retrieval-and-synthesis process, often powered by approaches such as Retrieval-Augmented Generation (RAG). In simple terms, they don’t just generate answers; they find, evaluate, and assemble information from multiple sources before deciding what to cite. Here’s what that process looks like in practice:
1. Query understanding
Everything starts with intent. The AI interprets what the user is really asking, whether it’s informational, navigational, or commercial. This step shapes what kind of sources it will look for.
2. Retrieval of sources
Next, the system pulls in potential sources from multiple places:
- Web indexes
- Training data patterns
- Live retrieval systems (depending on the model)
This is where your content first enters the consideration set.
3. Source evaluation
Not all sources are treated equally. AI models evaluate them based on:
- Relevance to the query
- Authority and trust signals
- Clarity and structure of information
- Entity-level trust (how credible the brand or author is)
When you look at these signals closely, they all point in one direction.
Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T) play a central role in determining what gets cited. In other words, AI systems aren’t just looking for answers; they’re looking for reliable sources behind those answers.
4. Answer synthesis
Instead of showing individual links, AI combines insights from multiple sources into a single, cohesive answer. This is where your content may be used, even if it’s not directly cited.
5. Citation selection
Finally, the model decides which sources to:
- Explicitly cite (with links or references)
- Implicitly use (without direct attribution)
This is the step that ultimately determines your visibility.
How this differs across AI systems
While the core process is similar, different AI tools prioritize different parts of this pipeline.
AI system | How it handles citations
ChatGPT | Leans more on third-party sources and consensus, such as directories, reviews, and aggregator sites, rather than relying heavily on brand-owned content.
Perplexity | Focuses on retrieval-first behavior, pulling from a wide range of web sources and surfacing multiple citations to support transparency (strong emphasis on external validation).
Gemini | Prioritizes brand-owned and structured content, especially pages that are clearly organized and easy to interpret.
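To make steps 2 to 5 concrete, here is a deliberately toy Python pipeline. It is not how ChatGPT, Perplexity, or Gemini actually work. Keyword overlap stands in for real query understanding and retrieval, and the authority scores, the 0.7/0.3 weights, and the citation threshold are all made-up assumptions. What it does illustrate is the difference between sources that are used in an answer and sources that are explicitly cited.

```python
import re
from dataclasses import dataclass

@dataclass
class Source:
    url: str
    text: str
    authority: float  # hypothetical 0-1 trust score for the brand or author

def tokenize(text):
    """Lowercase word set; a crude stand-in for real query understanding."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query, corpus, k=2):
    """Step 2: keep the k sources sharing the most terms with the query."""
    q = tokenize(query)
    return sorted(corpus, key=lambda s: len(q & tokenize(s.text)), reverse=True)[:k]

def evaluate(query, sources, w_relevance=0.7, w_authority=0.3):
    """Step 3: blend query relevance with a trust signal, best first."""
    q = tokenize(query)
    scored = [
        (w_relevance * len(q & tokenize(s.text)) / len(q) + w_authority * s.authority, s)
        for s in sources
    ]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)

def synthesize_and_cite(scored, cite_threshold=0.78):
    """Steps 4-5: every retrieved source feeds the answer; only high scorers are cited."""
    answer = " ".join(s.text for _, s in scored)
    cited = [s.url for score, s in scored if score >= cite_threshold]
    used_uncited = [s.url for score, s in scored if score < cite_threshold]
    return answer, cited, used_uncited

# Placeholder corpus; URLs, texts, and authority scores are invented.
corpus = [
    Source("https://example.com/guide", "AI citations are references included in AI-generated answers", 0.9),
    Source("https://example.org/forum", "Someone asked what AI citations are", 0.2),
    Source("https://example.net/recipe", "A recipe for banana bread", 0.8),
]
query = "what are AI citations"
answer, cited, used_uncited = synthesize_and_cite(evaluate(query, retrieve(query, corpus)))
print(cited)         # ['https://example.com/guide']
print(used_uncited)  # ['https://example.org/forum']
```

The forum post matches the query wording more closely but still isn't cited. Its hypothetical trust score pulls the combined score below the threshold, so it contributes to the answer without attribution.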
Key signals AI models use for citing content
Even though the process is complex, the signals that increase your chances of being cited are surprisingly consistent:
- Well-organized structure: Clear headings, bullet points, and logical flow make it easier for AI to extract information
- Evidence-based reasoning: Content that references data, sources, or supporting claims is more likely to be trusted
- Timeliness and relevance: Fresh, updated content often gets prioritized, especially for evolving topics
- Authoritative voice and depth: Content that demonstrates expertise and covers a topic comprehensively stands out
- Topical consistency: Brands that consistently publish around a topic are more likely to be recognized as reliable sources
The key takeaway here is simple: AI citations are not random. They are the result of a structured evaluation process in which clarity, trust, and relevance determine who is included in the final answer.
Strategies to get cited by AI models
So far, we’ve looked at what AI citations are and how models decide what to cite. The next question is the one that matters most: how do you actually get cited? This isn’t just about creating content; it’s about sending the right signals that your content is worth citing. Here are some strategies that can help you do exactly that:
1. Create citation-friendly content
Citation-worthy content goes beyond surface-level answers. It offers original thinking, clear explanations, and real value, helping AI models support their responses with confidence. In other words, it’s not just optimized; it earns references by being genuinely useful.
The following content types consistently get cited by AI models:

| Content type | What to write | Why AI loves them |
|---|---|---|
| Original research | Studies or data that answer new or unexplored questions | Gives AI concrete evidence to support claims |
| Case studies | Real-world examples showing how something works in practice | Helps AI justify recommendations with proof |
| Thought leadership | Opinion-led content with unique insights or perspectives | Adds depth and diversity to AI-generated answers |
| News content | Timely, accurate coverage of recent developments | Fills gaps where training data falls short |

2. Build topical authority (clusters)

AI models don’t just evaluate individual pages; they evaluate how consistently you cover a topic. If you publish multiple pieces on a specific subject, each addressing a different aspect, you signal depth, expertise, and reliability. That’s what topical authority is all about.

This is where E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness) naturally comes into play. The more consistently you demonstrate experience and expertise in a niche, the more likely your content is to be trusted and cited.

What to do in practice:

- Create clusters around a core topic (a pillar page or cornerstone content, plus supporting content)
- Cover both broad and specific questions in your niche
- Go beyond basic answers: add expert insights, examples, or real-world context
- Keep your messaging and terminology consistent across content

3. Strengthen entity signals (brand, authorship, schema)

AI systems evaluate content, but they also evaluate who is behind it. Strong entity signals help models understand your brand, your authors, and your credibility within a topic. The clearer these signals are, the easier it is for AI to trust and cite your content.

What to do in practice:

- Build clear author profiles with expertise and credentials
- Maintain consistent brand mentions across your site and the web
- Use structured data (schema) to define authors, organizations, and content relationships
- Ensure your “About” and author pages clearly establish credibility

4. Earn external validation signals across the web

AI models don’t rely on a single source of truth. They validate information by cross-referencing multiple sources across the web. That means your credibility isn’t built only on your website; it’s shaped by how consistently your brand shows up across trusted platforms. The more aligned and authoritative those signals are, the easier it is for AI systems to trust and cite your content.

Think of this as building a web-wide validation layer that reinforces your brand through multiple independent sources.

This is also where traditional SEO practices like link building evolve. It’s no longer just about backlinks, but about earning consistent, high-quality mentions that strengthen your entity across the web.

What to do in practice:

- Contribute insights to reputable publications in your niche
- Earn consistent mentions across industry blogs, directories, and review platforms
- Build high-quality backlinks through a strategic link-building approach
- Be active in communities like Reddit, Quora, or niche forums
- Run digital PR campaigns that reinforce your brand narrative across sources

5. Keep content fresh and updated

AI models prefer content that reflects current information. Outdated content is less likely to be trusted, especially for topics that evolve quickly. Regular updates signal that your content is still relevant and reliable.

What to do in practice:

- Refresh key articles with updated data, examples, and insights
- Add new sections instead of rewriting from scratch where possible
- Clearly indicate updates (timestamps, revised sections)
- Prioritize high-performing or high-potential pages for updates

Must read: How to optimize content for AI LLM comprehension using Yoast’s tools

6. Structure content for answer extraction

AI models don’t read content the way humans do; they extract answers. Most AI-generated responses are built by identifying clear, concise answer blocks within content, and users increasingly prefer this format.
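As a rough illustration of what an “answer block” can look like to a machine, the sketch below pairs each markdown heading with the first paragraph beneath it and flags openings that run past a word budget. It is a toy under stated assumptions: the content is markdown, the 50-word budget is an arbitrary placeholder, and `extract_answer_blocks` is a hypothetical helper. It does not describe how ChatGPT, Gemini, or any other AI system actually parses pages.

```python
import re

# A markdown ATX heading: 1-6 '#' characters, whitespace, then the title.
HEADING_RE = re.compile(r"^#{1,6}\s+(.+?)\s*$")


def extract_answer_blocks(
    markdown: str, max_words: int = 50
) -> list[tuple[str, str, bool]]:
    """Pair each heading with the first paragraph that follows it.

    Returns (heading, first_paragraph, is_concise) tuples, where is_concise
    is True when the paragraph has at most max_words words. The 50-word
    default is an arbitrary placeholder (assumption), not a known AI threshold.
    """
    results: list[tuple[str, str, bool]] = []
    heading = None
    paragraph: list[str] = []
    paragraph_closed = False

    def emit() -> None:
        # Record the section collected so far, if it has any opening text.
        if heading is not None and paragraph:
            text = " ".join(paragraph)
            results.append((heading, text, len(text.split()) <= max_words))

    for line in markdown.splitlines():
        stripped = line.strip()
        match = HEADING_RE.match(stripped)
        if match:
            emit()
            heading, paragraph, paragraph_closed = match.group(1), [], False
        elif not stripped:
            # A blank line ends the opening paragraph once it has started.
            if paragraph:
                paragraph_closed = True
        elif heading is not None and not paragraph_closed:
            # Only lines of the first paragraph under a heading are kept.
            paragraph.append(stripped)

    emit()
    return results


if __name__ == "__main__":
    sample = "# What is an AI citation?\nA source that an AI-generated answer links to.\n"
    for title, text, concise in extract_answer_blocks(sample):
        print(f"{title!r}: concise={concise}")
```

Running something like this over a draft gives a quick list of sections whose opening paragraph may not work as a standalone answer. Treat the output as a prompt for editing rather than a score.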
According to a poll by IWAI, 67% of users find AI tools more efficient than traditional search for getting answers. That shift makes one thing clear: if your content doesn’t directly answer questions, it’s less likely to be surfaced or cited. It’s not enough to include answers; you need to structure your content so those answers are easy to find, interpret, and reuse.

What to do in practice:

- Lead sections with direct, concise answers before expanding
- Use headings that mirror real user queries and intent
- Break down complex topics into scannable, extractable sections
- Add summaries, definitions, or key takeaways at the start of sections
- Anticipate follow-up questions and answer them within the same content

Tracking AI brand presence with Yoast

By now, we know what AI citations are, how they work, and how to earn them. But here’s the real question: how do you know if you’re already being cited? And if not, how do you find out where your competitors are showing up and where you’re missing out?

That’s the gap Yoast AI Brand Insights is built to fill. As AI-generated answers become a key discovery layer, most traditional analytics tools fall short. They can tell you about traffic, but not whether your brand is being mentioned, how it’s being perceived, or which sources AI systems trust when referencing you. That’s a critical blind spot, especially as AI answers increasingly shape user decisions before a click even occurs.

Yoast AI Brand Insights helps you track and understand your AI visibility, citations, and brand mentions across platforms like ChatGPT, Gemini, and Perplexity, so you can move from guesswork to informed action. Here’s what it enables you to do:

Sentiment tracking

Understand how your brand is being perceived in AI-generated answers. The tool analyzes keywords associated with your brand and shows whether the overall sentiment is positive or negative, helping you spot tone issues and shifts over time.
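To make keyword-based sentiment more concrete, here is a minimal lexicon-counting sketch. It is not Yoast’s method, which the article doesn’t describe: the word lists, the hypothetical `summarize_sentiment` helper, and the net score (positive hits minus negative hits, divided by total hits) are all illustrative assumptions.

```python
from dataclasses import dataclass

# Illustrative word lists only (assumption); real tools use trained models
# or far larger curated lexicons.
POSITIVE_WORDS = {"reliable", "easy", "trusted", "helpful", "fast"}
NEGATIVE_WORDS = {"expensive", "buggy", "slow", "confusing", "outdated"}


@dataclass
class SentimentSummary:
    positive: int  # keywords found in POSITIVE_WORDS
    negative: int  # keywords found in NEGATIVE_WORDS
    score: float   # (positive - negative) / (positive + negative), or 0.0
    label: str     # "positive", "negative", or "neutral"


def summarize_sentiment(keywords: list[str]) -> SentimentSummary:
    """Net out lexicon hits among keywords associated with a brand."""
    normalized = [word.strip().lower() for word in keywords]
    positive = sum(word in POSITIVE_WORDS for word in normalized)
    negative = sum(word in NEGATIVE_WORDS for word in normalized)
    total = positive + negative
    # Keywords outside both lists (e.g. "plugin") don't affect the score.
    score = (positive - negative) / total if total else 0.0
    if score > 0:
        label = "positive"
    elif score < 0:
        label = "negative"
    else:
        label = "neutral"
    return SentimentSummary(positive, negative, score, label)


if __name__ == "__main__":
    print(summarize_sentiment(["Reliable", "easy", "expensive", "plugin"]))
```

Comparing summaries computed from answers collected on different dates is one simple way to see a shift in tone over time. A production tool would rely on a proper sentiment model rather than two short word lists.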
Citation analysis (brand mentions)

See when and where your brand is being cited. More importantly, understand which sources AI platforms reference alongside your brand, so you can identify citation gaps and opportunities to improve your presence.

Competitor benchmarking

AI visibility is relative. This feature lets you compare your brand’s citations, mentions, and sentiment against competitors, helping you understand who is being surfaced more often and why.

Question monitoring

AI search is driven by queries. With question monitoring, you can track specific brand-related or industry questions and see whether your brand appears in the answers, giving you direct insight into where you’re visible and where you’re missing.

AI visibility index

Instead of looking at isolated metrics, Yoast combines signals like citations, mentions, sentiment, and rankings into a single visibility score. This gives you a clearer picture of how your brand performs across AI systems over time.

The bigger picture here is simple: Yoast AI Brand Insights helps you understand your position in this new ecosystem, so you can strengthen your presence, close gaps, and ensure your brand is part of the answers your audience is already consuming.

FAQs on AI citations

AI citations can feel complex at first, especially as search continues to evolve. Here are answers to some of the most common questions to help you navigate them.

Are backlinks different from AI citations?

Yes, they serve different purposes. Backlinks help your pages rank in traditional search, while AI citations determine whether your content gets included in AI-generated answers. In short, backlinks drive visibility on SERPs, while citations drive visibility within answers. If you want a deeper breakdown, check out this guide on AI citations vs backlinks.

Do AI systems always provide citations?

No, AI systems don’t always include citations.
When responses are generated purely from pre-trained knowledge rather than retrieved sources, citations may not appear.

To test this, I tried the following prompts on ChatGPT:

Out of these, citations appeared in about half of the responses. A clear pattern emerged:

- Queries involving products, recommendations, statistics, or recent events were more likely to trigger citations
- Queries focused on definitions or general knowledge often did not include citations

This shows that citation behavior depends heavily on the query type, intent, and context. Not every answer requires a source, but the more specific or evidence-driven the query, the more likely citations are to appear.

How do I direct AI models to the most important content on my website?

You can’t directly control what AI models choose to cite, but you can make it easier for them to understand and prioritize your content. One effective way to do this is by using llms.txt, a feature in Yoast SEO. It creates a structured, LLM-friendly markdown file that highlights your most important pages, helping LLMs better understand your site when generating answers.

Think of it as a way to clearly communicate which content matters most, so when AI systems look for reliable sources, your key pages are easier to interpret and surface.

AI citations: The currency of the AI-driven web

AI citations are changing how users discover and trust information. They don’t just complement rankings; they reshape them by deciding which sources become part of the answer itself. In many cases, users no longer need to click to explore. If your content is cited, you’re visible. If not, you’re invisible.

This shift also changes what we optimize for. It’s no longer just about traffic; it’s about trust, relevance, and inclusion in the answer layer.
As we explored in our recent article, Rethinking SEO in the age of AI, the central question for SEO is evolving. It’s no longer just “Can Google find my website?” It’s now “Does the AI have a reason to remember my brand?”

The post AI citations explained: how they work and how to get them appeared first on Yoast.

-

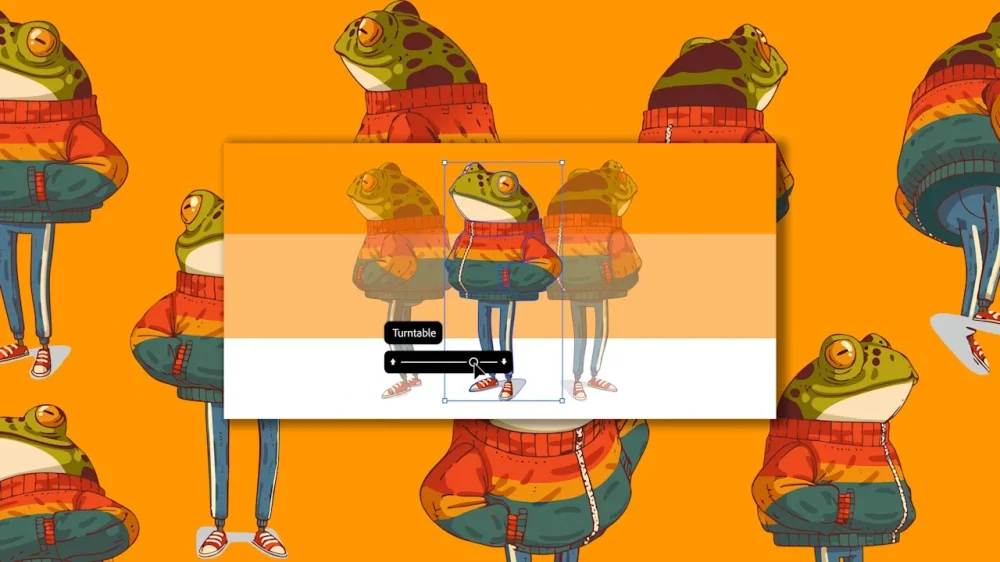

Embrace Filming on Analog Video in 2026