All Activity

- Past hour

-

US economy sheds 92,000 jobs in February in sharp slide

Figure far below expectations comes as doubts persist over labour market strength. View the full article

-

‘Always be testing’ worked in 2016 — it’s risky in 2026

If I hear “always be testing” one more time, I might scream. It was great advice in 2016. In 2026, it’s a great way to light your budget on fire. That mantra made sense when budgets were loose and platforms forgave a lot of chaos. Launch five audience tests simultaneously? Sure, why not! Swap out three creative variables at once? Go for it! But the rules have changed. Our new reality has tighter budgets, longer learning phases, and signal fragmentation everywhere. One poorly structured test can distort your performance for weeks, not days. That performance hit compounds fast. Modern experimentation is expensive and risky. Why pay that price when we have the power of agentic AI to help? And by help, I don’t mean slapping AI onto our existing process and asking it to generate more ad variants. That would just be an expedient way to light our budgets on fire. Instead, it’s time to use agentic AI to design smarter experimentation systems. The real cost of unstructured testing In an “always be testing” era, it was all too easy to throw things to test at the scale Oprah gives out cars or Taylor Swift fills auditoriums. It often led to unstructured testing where we launched ideas on a Monday and checked results on Friday hoping for a lift. There was nary a risk model, overlap detection, or strategic sequencing in sight. The costs of that approach are now exponentially higher. Take platform disruption. Algorithms crave stability. Industry benchmarks show ad sets stuck in learning phases often see CPAs 20-40% higher than stable sets. Every time you significantly change creative, audience, or budget, you risk resetting that learning. If you’re running three overlapping tests that each trigger resets, you’re voluntarily paying a volatility tax on your entire media spend. Then there’s waste. The majority of A/B tests deliver no statistically significant lift. If you aren’t ruthless about what deserves to run, you’re burning budget to prove most ideas don’t matter. 
“Always be testing” without guardrails turns into “always be destabilizing.” From random tests to a real experimentation engine The shift looks like this. Old approach: “AI, write me 10 new headlines.” New approach: “AI, design the smartest next experiment within our budget, risk tolerance, and current learning state.” The reframe from creative generation to experimentation architecture is where real leverage lives. Here’s a practical seven-step framework to turn testing from a tactical habit into strategic infrastructure. Step 1: Set hard guardrails (humans draw the lines) Before you let any AI near your experiments, lock in constraints. Without them, AI lacks proper context. With them, AI becomes a disciplined strategic partner. Define and document five hard boundaries. Budget allocation: Reserve a fixed percentage (e.g., 10%) explicitly for testing. Maximum volatility: “No test can increase CPA by more than 15% for more than 5 days.” Learning phase sensitivity: Document reset thresholds per platform. Leading indicators: Use early signals (CTR, engagement drop-offs) to kill bad tests before they damage pipeline. Brand risk: Define off-limits positioning (e.g., no discount-heavy testing in enterprise segments). Document this in a single file (e.g., experimentation-guardrails.md) to teach AI the constraints that make ideas viable. Your AI agent must reference this before proposing any test. Step 2: Let AI audit your experiment history Most teams have the data sitting in spreadsheets, but never extract the lessons. Feed your last six months of test results into an AI agent and have it analyze variables changed, duration, performance delta, statistical confidence, and platform resets. Ask it to find patterns, such as: Over-tested variables: CTA buttons tested eight times with zero meaningful lift? 
That’s not a lever. False failures: Many tests are declared losers simply because they never reached statistical significance. An AI agent can quickly assess statistical power and flag inconclusive results. Volatility patterns: Often, your worst CPA weeks weren’t market shifts or a single bad creative, but rather the weeks where you launched three overlapping tests. This is how AI becomes a true analytical partner. Step 3: Write real hypotheses Rather than jumping straight from idea to launch, use AI to help you enforce hypothesis discipline. Weak: “Let’s test a new headline.” Strong: “If we emphasize ‘faster time-to-value’ over ‘ease of use,’ we expect a 10-5% lift in demo requests from mid-market companies because win/loss analysis shows speed is their top decision criterion.” Structured hypotheses create institutional memory. Six months later, when someone suggests testing “speed messaging” again, you’ll know exactly who it worked for and why. Yes, it feels like paperwork, but this discipline can protect your budget from algorithm chaos. Step 4: Risk-score every proposed test Budget isn’t infinite and neither is algorithm stability. Your AI agent should evaluate each proposed test across five dimensions and assign a risk score. Budget impact (e.g., <5% vs >15%). Algorithm disruption level (minor refresh vs new campaign). Audience overlap. Brand sensitivity. Learning value. High risk + low learning = Kill it. Low risk + high insight = Green light. Example: Testing a radical new enterprise positioning statement is high risk in a paid conversion campaign. Instead, your AI agent might suggest validating it first via organic LinkedIn content or low-budget audience polling. Low risk. High signal. Step 5: Pre-test with synthetic audiences This is one of the most underused applications of AI in experimentation. 
Synthetic testing means simulating how different personas may react to messaging before spending media dollars, and the data backs it up. A study involving researchers from Stanford and Google DeepMind found that digital agents trained on interview data matched human survey responses with 85% accuracy and mimicked social behavior with 98% correlation. This makes synthetic audiences surprisingly useful for early-stage signal gathering. While they don’t replace real-world data (at least not yet), they can act as creative QA. Here’s how it works. Define psychographic archetypes. The Skeptical CMO (burned by vendors, risk-sensitive). The Growth VP (speed-obsessed). The CFO (margin-focused). Feed your proposed messaging into your AI system and ask, “How would the Skeptical CMO react to this?” You might get feedback like: “The phrase ‘All-in-One’ triggers skepticism. It signals feature bloat. Consider reframing as ‘Integrated’ or ‘Modular.’” That kind of signal costs pennies in API calls instead of thousands in paid testing. Step 6: Sequence tests, don’t stack them Changing audience, creative, and landing page in the same week teaches you almost nothing. Your AI agent should act like air traffic control: scan active campaigns, flag conflicts, and recommend sequencing. A better flow: Week 1-2: Audience test. Week 3-4: Creative test on the winning audience. If overlap is unavoidable, enforce clean holdout groups so you always have a source of truth. Step 7: Build a living knowledge base Treat tests like disposable experiments and you lose the compounding value. Have your AI auto-summarize every completed test: Why did it win? Who did it win with? How durable was the lift? What variables interacted? Over time, this database becomes your moat. Everyone can buy the same targeting. Few teams have 100+ validated customer truths at their fingertips.
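As a rough illustration of the risk scoring described in Step 4, here is a minimal Python sketch. The article names the five dimensions and the two decision rules (high risk plus low learning means kill, low risk plus high insight means green light), but it gives no scale, weights, or thresholds. The 1-5 scale, the equal weighting, the cutoffs, the "review" fallback, and every name below are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class ProposedTest:
    # All fields use a hypothetical 1-5 scale (an assumption, not from the article).
    name: str
    budget_impact: int         # 1 = under 5% of budget, 5 = over 15%
    algorithm_disruption: int  # 1 = minor refresh, 5 = new campaign
    audience_overlap: int      # 1 = no overlap with live tests, 5 = heavy overlap
    brand_sensitivity: int     # 1 = safe, 5 = touches off-limits positioning
    learning_value: int        # 1 = little insight, 5 = high insight


def risk_score(test: ProposedTest) -> float:
    """Average the four risk dimensions; learning value is judged separately."""
    risks = [
        test.budget_impact,
        test.algorithm_disruption,
        test.audience_overlap,
        test.brand_sensitivity,
    ]
    return sum(risks) / len(risks)


def decide(test: ProposedTest, high_risk: float = 3.5, high_learning: int = 4) -> str:
    """Apply the two rules from the article; anything else goes to a human."""
    risk = risk_score(test)
    if risk >= high_risk and test.learning_value < high_learning:
        return "kill"
    if risk < high_risk and test.learning_value >= high_learning:
        return "green light"
    return "review"  # assumed fallback for mixed cases


headline_refresh = ProposedTest("headline refresh", 1, 1, 2, 1, 4)
repositioning = ProposedTest("enterprise repositioning in paid", 4, 5, 3, 5, 2)

for candidate in (headline_refresh, repositioning):
    print(candidate.name, round(risk_score(candidate), 2), decide(candidate))
```

Keeping learning value out of the risk average is a design choice for this sketch: it lets a risky but highly informative test land in "review" instead of being killed automatically.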
The bigger shift: From activity to architecture “Always be testing” was a growth-era mindset. In 2026, the winning mindset is “always be compounding intelligence.” Rather than more tests, build your competitive advantage through structured, risk-aware, insight-driven experimentation that protects algorithm stability and ties experimentation directly to revenue. The next time your stakeholder asks why you aren’t testing more, show them your experimentation architecture and say, “We’re not just running experiments. We’re building an intelligence engine.” Because intelligence compounds. View the full article
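The maximum-volatility guardrail quoted in Step 1 of the article above ("No test can increase CPA by more than 15% for more than 5 days") is the kind of rule that can be checked mechanically. The sketch below is one possible reading, not the author's method: it assumes "more than 5 days" means more than five consecutive days, and it assumes a fixed baseline CPA is available. The function name and parameters are hypothetical.

```python
def breaches_cpa_guardrail(daily_cpa, baseline_cpa, max_increase=0.15, max_days=5):
    """Return True if CPA stayed more than `max_increase` above the baseline
    for more than `max_days` consecutive days (assumed interpretation)."""
    limit = baseline_cpa * (1 + max_increase)
    streak = 0
    for cpa in daily_cpa:
        # Extend the streak on a breaching day, reset it otherwise.
        streak = streak + 1 if cpa > limit else 0
        if streak > max_days:
            return True
    return False


# Six straight days well above a baseline of 50 breaches the guardrail.
print(breaches_cpa_guardrail([60, 60, 60, 60, 60, 60], baseline_cpa=50))
# A recovery on day four resets the streak, so this does not breach.
print(breaches_cpa_guardrail([60, 60, 60, 50, 60, 60, 60], baseline_cpa=50))
```

A check like this could run daily against each active test and feed the kill decision, with the thresholds read from the guardrails file the article recommends.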

-

Pentagon follows through with its threat, labels Anthropic a supply chain risk ‘effective immediately’

The Trump administration is following through with its threat to designate artificial intelligence company Anthropic as a supply chain risk in an unprecedented move that could force other government contractors to stop using the AI chatbot Claude. The Pentagon said in a statement Thursday that it has “officially informed Anthropic leadership the company and its products are deemed a supply chain risk, effective immediately.” The decision appeared to shut down the opportunity for further negotiation with Anthropic, nearly a week after President Donald Trump and Defense Secretary Pete Hegseth accused the company of endangering national security. Trump and Hegseth announced a series of threatened punishments last Friday, on the eve of the Iran war, after Anthropic CEO Dario Amodei refused to back down over concerns the company’s products could be used for mass surveillance of Americans or autonomous weapons. Amodei said in a statement Thursday that “we do not believe this action is legally sound, and we see no choice but to challenge it in court.” The Pentagon statement said, “this has been about one fundamental principle: the military being able to use technology for all lawful purposes. The military will not allow a vendor to insert itself into the chain of command by restricting the lawful use of a critical capability and put our warfighters at risk.” Amodei countered that the narrow exceptions Anthropic sought to limit surveillance and autonomous weapons “relate to high-level usage areas, and not operational decision-making.” He said there were “productive conversations” with the Pentagon in recent days over whether it could keep using Claude or establish a “smooth transition” if no agreement was reached. Trump gave the military six months to phase out Claude, which is already widely embedded in military and national security platforms. 
Amodei said it’s a priority to make sure warfighters won’t be “deprived of important tools in the middle of major combat operations.” Some military contractors were already cutting ties with Anthropic, a rising star in the tech industry that sells Claude to a variety of businesses and government agencies. Lockheed Martin said it will “follow the President’s and the Department of War’s direction” and look to other providers of large language models. “We expect minimal impacts as Lockheed Martin is not dependent on any single LLM vendor for any portion of our work,” the company said. How the Defense Department will interpret the scope of the risk designation is unclear. Amodei said a notification Anthropic received from the Pentagon on Wednesday shows it only applies to Claude’s use by customers as a “direct part of” their military contracts. Microsoft said its lawyers studied the rule and the company “can continue to work with Anthropic on non-defense related projects.” Pentagon draws criticism for its decision The Pentagon’s decision to apply a rule designed to address supply threats posed by foreign adversaries was met with broad criticism. Federal codes have defined supply chain risk as a “risk that an adversary may sabotage, maliciously introduce unwanted function, or otherwise subvert” a system in order to disrupt, degrade or spy on it. U.S. Sen. Kirsten Gillibrand, a New York Democrat and member of the Senate Armed Services Committee and Senate Intelligence Committee, called it “a dangerous misuse of a tool meant to address adversary-controlled technology.” “This reckless action is shortsighted, self-destructive, and a gift to our adversaries,” she said in a written statement Thursday. Neil Chilson, a Republican former chief technologist for the Federal Trade Commission who now leads AI policy at the Abundance Institute, said the decision looks like “massive overreach that would hurt both the U.S. 
AI sector and the military’s ability to acquire the best technology for the U.S. warfighter.” Earlier in the day, a group of former defense and national security officials sent a letter to U.S. lawmakers expressing “serious concern” about the designation. “The use of this authority against a domestic American company is a profound departure from its intended purpose and sets a dangerous precedent,” said the letter from former officials and policy experts, including former CIA director Michael Hayden and retired Air Force, Army and Navy leaders. They added that such a designation is meant to “protect the United States from infiltration by foreign adversaries — from companies beholden to Beijing or Moscow, not from American innovators operating transparently under the rule of law. Applying this tool to penalize a U.S. firm for declining to remove safeguards against mass domestic surveillance and fully autonomous weapons is a category error with consequences that extend far beyond this dispute.” Anthropic sees boost in consumer downloads While losing big partnerships with defense contractors, Anthropic experienced a surge of consumer downloads over the past week due to people siding with its moral stance. More than a million people signed up for Claude each day this week, the company said, lifting it past OpenAI’s ChatGPT and Google’s Gemini as the top AI app in more than 20 countries in Apple’s app store. The dispute with the Pentagon has also further deepened Anthropic’s bitter rivalry with OpenAI that started when ex-OpenAI leaders, including Amodei, started Anthropic in 2021. Hours after the Pentagon punished Anthropic last Friday, OpenAI announced a deal to effectively replace Anthropic with ChatGPT in classified military environments. 
OpenAI said it sought similar protections against domestic surveillance and fully autonomous weapons but later had to amend its agreements, leading CEO Sam Altman to say he shouldn’t have rushed a deal that “looked opportunistic and sloppy.” Amodei also expressed regret about his own part in that “difficult day for the company,” saying Thursday he wanted to “directly apologize” for an internal note he sent to Anthropic staff that attacked OpenAI’s behavior and suggested Anthropic was being punished for not giving “dictator-like praise” to Trump. —Matt O’Brien and Konstantin Toropin, Associated Press View the full article

-

Silent killer: return of submarine war and death by torpedo

The sinking of an Iranian ship near Sri Lanka, the first of its kind in decades, has sparked scrutiny about Washington’s tactics. View the full article

-

5 Fashion Nova Discount Codes You Can’t Miss on RetailMeNot

If you’re looking to save on your next Fashion Nova purchase, you should know about the top discount codes available on RetailMeNot. For instance, using the E25 code gives you $25 off orders over $100, while the FNFAST code offers 30% off purchases exceeding the same threshold. New customers can also get an exclusive 10% off, along with various seasonal promotions. Curious about additional codes that can maximize your savings? Key Takeaways Use code E25 for $25 off purchases over $100, and promo code EUP for 10% off smaller orders. New customers can enjoy exclusive discounts of up to 50% with minimum purchase requirements. Black Friday offers up to 50% off with the code FNBLACK50, including clearance items. RetailMeNot regularly updates discount codes, so check for the latest offers and savings. Combine discount codes with free shipping on orders over $75 for additional savings. $25 Off Your Order If you’re looking to save on your Fashion Nova order, there are several discount codes available that can help you reduce your total. One option is the set of Fashion Nova discount codes that RetailMeNot lists, which often include substantial deals. For example, you can use code E25 to get $25 off orders of $100 or more, making it a great choice for larger purchases. If you’re making a smaller order, consider the promo code EUP, which gives you 10% off your purchase. Also keep an eye out for seasonal promotions that can offer discounts of up to 50%, particularly during major events like Black Friday and Cyber Monday. By checking RetailMeNot regularly, you can find exclusive deals not available elsewhere. Combining these codes with ongoing promotions, such as free shipping on orders over $75, can further increase your savings. 30% Off Sitewide Fashion Nova frequently offers sitewide discounts that can make shopping more affordable for everyone. 
One popular option is a 25% off discount code for sitewide purchases, which requires a minimum order of $99. If you’re a frequent shopper, you can take advantage of a 30% off discount code, FNAC, valid on purchases over $100, allowing you to save even more on larger orders. New customers should also check for a 10% off promo code to improve their initial shopping experience. RetailMeNot regularly updates Fashion Nova discount codes, so you have access to the latest verified promo codes. Although these codes can provide significant savings, keep in mind that only one discount code can be applied per order. Choose the most advantageous option to maximize your savings when shopping at Fashion Nova. Up to 50% Off Black Friday Deals As the holiday shopping season approaches, shoppers can take advantage of up to 50% off during Fashion Nova’s Black Friday deals, a prime opportunity to save on a wide range of styles. This year’s promotions cover various items, with significant discounts applied directly to prices across the store. You can boost your savings by using popular discount codes like FNBLACK50 during these events. Additionally, clearance items are included in the Black Friday sale, allowing for deeper savings by combining clearance prices with the Black Friday discounts. This means you could find exceptional deals where the total savings exceed $100 on larger orders. Don’t forget to use a promo code extension to track and apply your discounts seamlessly, so you get the best possible price on your holiday purchases. Take advantage of these offers to refresh your wardrobe affordably this season. Free Shipping on Orders Over $75 After taking advantage of significant savings during the Black Friday deals, shoppers can further improve their experience with Fashion Nova’s free shipping offer on orders over $75. 
This promotion ensures you save on delivery costs while enjoying your new styles. Free standard shipping typically delivers your items within 3-7 business days, making it a convenient choice for online shopping.

Order Amount | Free Shipping Available | Notes
Over $75 (USA) | Yes | Standard shipping only
Over CAD $105 (Canada) | Yes | International orders
Under $75 | No | Shipping fees apply
Oversize Items | No | Check product specifications
Combine Offers | Yes | Use with discount codes

10% Off for New Customers New customers can access significant savings at Fashion Nova with exclusive discount codes that offer up to 50% off your first purchase during special promotional events. To maximize your savings, regularly check the RetailMeNot mobile app for updated coupon codes tailored specifically to new customers. These offers can change frequently, so staying informed is key. Typically, promotions may require a minimum purchase amount, often around $100, to unlock discounts like 30% off. In addition, Fashion Nova often provides a special welcome offer for new customers who sign up for their newsletter, which can include even more savings. Don’t forget to keep an eye on seasonal sales events, as new customer discounts are frequently highlighted during major sales like Black Friday and Cyber Monday. Frequently Asked Questions What Are Some Discount Codes for Fashion Nova? For Fashion Nova, you can use various discount codes to save on your purchases. One popular option is FNFAST, offering 30% off orders over $100. There’s also a code for 25% off storewide on purchases of $99 or more. If you’re a new customer, sign up for the newsletter to get 10% off your first order. What Is the SBM50 Promo Code? The SBM50 promo code offers you a 50% discount on eligible purchases at Fashion Nova. Typically available during major sales events, this code has certain conditions, such as minimum spend requirements. You can only use it once per order, as Fashion Nova doesn’t allow stacking codes. 
To make the most of this offer, keep an eye on promotional updates to make sure you can apply the SBM50 code when shopping. Can You Stack Discount Codes on Fashion Nova? No, you can’t stack discount codes on Fashion Nova. When you apply a new promo code, it replaces any existing code in your cart. This means you should always use the best available code to maximize your savings. Since only one code can be applied per order, it’s important to stay informed about current promotions and their restrictions to ensure you get the most value during checkout. What Is the Fashion Nova 40% off Coupon? The Fashion Nova 40% off coupon offers significant savings on eligible purchases. To use it, you’ll need to meet a specified minimum order amount, which is outlined in the promotion’s terms. Keep in mind that this coupon can only be used once per customer and can’t be combined with other discounts. For the latest availability, check the Fashion Nova website or subscribe to their newsletters for updates on current promotions. Conclusion To conclude, Fashion Nova offers various discount codes on RetailMeNot that can improve your shopping experience. Whether you’re looking for $25 off a $100 purchase with the E25 code or a 30% discount using FNFAST on larger orders, there are options for everyone. New customers can also take advantage of exclusive discounts. With free shipping on orders over $75 and seasonal promotions, you can save significantly on your fashion purchases while enjoying a wide selection. This article, "5 Fashion Nova Discount Codes You Can’t Miss on RetailMeNot" was first published on Small Business Trends View the full article

-

This Premium ASUS OLED Gaming Monitor Is Over $100 Off Right Now

We may earn a commission from links on this page. Deal pricing and availability subject to change after time of publication. High-refresh-rate gaming monitors are getting faster every year, but a 480Hz OLED panel still feels like a technical flex—and the ASUS ROG Swift OLED PG27AQDP is one such example. This 27-inch OLED gaming monitor is currently $662.36 on Amazon, down from its usual $799 price, and price trackers show that’s the lowest it has dropped so far. It sits in a very small group of monitors built around a 1440p panel with a 480Hz refresh rate, competing with models like the Sony Inzone M10S. It is designed first and foremost for high-end PC gaming, where extremely fast frame rates can actually make use of a panel this quick. A big part of the appeal here is the OLED panel paired with Micro Lens Array+ (MLA+) technology, which helps the screen get brighter than most OLED monitors. The difference shows up in games with strong lighting contrast. Dark scenes show the deep blacks OLED is known for, while bright elements like explosions or neon lights stand out more clearly than they do on many IPS displays. Motion also looks exceptionally clean. The 480Hz refresh rate and near-instant OLED response times make fast movement easier to track in shooters and competitive games. ASUS also includes features such as Extreme Low Motion Blur, OLED Anti-Flicker, and support for all major variable refresh rate formats, including AMD FreeSync and NVIDIA G-SYNC compatibility. Connectivity is up to date as well, with HDMI 2.1 ports that support modern consoles and GPUs. The performance is impressive, but the experience is not perfect. The hardware delivers exactly what competitive players want, yet the software side still feels rough around the edges. Some users report bugs where settings reset or behave unpredictably. 
There is also noticeable VRR flicker when frame rates change, and input lag increases when the monitor receives a 60Hz signal, which is something to keep in mind if you plan to use it for slower console games or everyday media. Still, for players chasing extremely high refresh rates and OLED contrast, this is among the most capable options available.
Our Best Editor-Vetted Tech Deals Right Now
Apple AirPods 4 Active Noise Cancelling Wireless Earbuds — $119.00 (List Price $179.00)
Samsung Galaxy S26 Ultra, Unlocked Android Smartphone + $200 Gift Card, 512GB, Privacy Display, Galaxy AI, AI Camera, Super Fast Charging 3.0, Durable Battery, 2026, US 1 Year Warranty, Black — $1,299.99 (List Price $1,499.99)
Samsung Galaxy Buds 4 AI Noise Cancelling Wireless Earbuds + $20 Amazon Gift Card — $179.99 (List Price $199.99)
Google Pixel 10a 128GB 6.3" Unlocked Smartphone + $100 Gift Card — $499.00 (List Price $599.00)
Apple iPad 11" 128GB A16 WiFi Tablet (Blue, 2025) — $329.00 (List Price $349.00)
Apple Watch Series 11 [GPS 46mm] Smartwatch with Jet Black Aluminum Case with Black Sport Band - M/L. Sleep Score, Fitness Tracker, Health Monitoring, Always-On Display, Water Resistant — $329.00 (List Price $429.00)
Amazon Fire TV Soundbar — $99.99 (List Price $119.99)
Deals are selected by our commerce team. View the full article

- Today

-

Tech and finance layoffs: Oracle, Block, Morgan Stanley, Capital One headline brutal week for job losses

The past week has been a brutal one for many working in the tech and financial industries. Thousands of jobs have been lost—or will be lost soon—from companies including Block, Morgan Stanley, Capital One, eBay, and, as reported today, software giant Oracle. Here’s what you need to know about the layoffs. Oracle to cut ‘thousands’ of jobs The most recent news of layoffs came yesterday, after Bloomberg reported that the database software giant Oracle Corporation (NYSE: ORCL) is planning to cut “thousands” of jobs as soon as this month. And yes, artificial intelligence is to blame—but not solely because AI is directly taking jobs. Instead, Oracle is reportedly planning job cuts to free up cash for its AI data center expansion, which the company is pursuing to compete with cloud computing giants Amazon and Microsoft. However, Bloomberg’s report noted that some of the jobs lost will be jobs “that the company expects it will need less of due to AI.” It is unknown exactly how many jobs will be lost, with Bloomberg noting that Oracle’s workforce reduction plans are “still active and could change.” Fast Company has reached out to Oracle for comment. As of May 2025, Oracle has around 162,000 employees. Capital One lays off over 1,100 workers On the same day of the Oracle job cuts report, financial giant Capital One (NYSE: COF) said that it was laying off more than 1,100 employees, according to CBS News. But these layoffs have nothing to do with AI. They follow Capital One’s acquisition of credit card giant Discover last year, which cost the company $50 billion. Shortly following that acquisition, 600 employees were laid off. Now, another 1,100 are expected to lose their jobs—primarily those who worked at the former Discover headquarters in Riverwoods, Illinois. 
A Capital One spokesperson confirmed the layoffs to CBS News, stating, “As part of our continued journey to integrate Discover with Capital One, we announced the difficult decision to eliminate some Discover associate roles across the organization.” Morgan Stanley eliminates 2,500 roles A day before the Capital One layoffs were reported, the Wall Street Journal reported that investment banking giant Morgan Stanley (NYSE: MS) was laying off around 2,500 workers, or about 3% of its roughly 83,000-strong workforce. The job cuts reportedly hit employees in three divisions: investment banking and trading, wealth management, and investment management, and are reportedly “tied to shifting business and location priorities,” according to the Journal, which cited anonymous sources. Fast Company reached out to Morgan Stanley for comment. Block layoffs decimated 4,000 jobs The most significant round of layoffs, however, came from Jack Dorsey’s Block (NYSE: XYZ). Last Friday, the fintech company cofounded by one of Twitter’s original cofounders announced sweeping job cuts totaling 4,000 positions. And Dorsey didn’t beat around the bush as to the reasons for the layoffs: AI. As Fast Company previously reported, Dorsey said his Block workforce was shrinking from 10,000 employees to just 6,000 due to the company’s increasing use of “intelligence tools,” which have allowed it to function with a “significantly smaller team.” “I don’t think we’re early to this realization. I think most companies are late,” Dorsey said in a memo published online. “Within the next year, I believe the majority of companies will reach the same conclusion and make similar structural changes. I’d rather get there honestly and on our own terms than be forced into it reactively.” eBay cuts 800 jobs The Block layoffs came just one day after legacy online shopping giant eBay (Nasdaq: EBAY) announced it was cutting about 6% of its workforce, or around 800 jobs. 
As Fast Company reported, the layoffs came about a week after eBay acquired the second-hand clothing app Depop from Etsy for $1.2 billion. “We are taking steps to reinvest across our business and align our structure with our strategic priorities, which will affect certain roles across our workforce,” an eBay spokesperson told Fast Company. “We are grateful for the contributions of the employees impacted and are committed to supporting them with care and respect.” Layoff announcements actually fell in February If there’s a silver lining at all to this, it’s that total layoffs appear to have fallen in February by a significant amount, according to a report from the outplacement firm Challenger, Gray & Christmas. The firm said that U.S.-based layoff announcements plunged by 55% in February, to 48,307, versus the month before. However, that dramatic fall is only as sharp as it is because January saw over 108,000 layoff announcements. For the month of February, the firm says the most jobs lost were in the technology sector, with about 11,000 jobs cut. Education came in second place, with around 5,400 jobs lost. Industrial Manufacturing came in third with about 4,100 jobs lost. Yet Challenger, Gray & Christmas cautions that the decline in job cuts might not last. “February’s dip is a nice reprieve from the elevated job cut plans to start the year,” the firm’s chief revenue officer, Andy Challenger, noted. “With U.S. involvement in a growing war in Iran, the end of Q1 may bring more layoff plans as companies tighten belts amid uncertainty and higher costs.” View the full article

-

Search News Buzz Video Recap: Google Heat Continues, AI Mode Recipe Link Cards, ChatGPT Web Search With Fewer Links & AI-Generated Search Landing Pages

This week... View the full article

-

AIO Citations Diverge From Rankings, Bing Rewrites Rules – SEO Pulse via @sejournal, @MattGSouthern

In SEO Pulse: AI Overview citations drift further from traditional rankings as AI search expands and platforms clarify how content appears in AI answers. The post AIO Citations Diverge From Rankings, Bing Rewrites Rules – SEO Pulse appeared first on Search Engine Journal. View the full article

-

Why most video ads fail — and what video metrics actually matter

Video advertising has never been easier to distribute. Platforms can deliver impressions and views at an enormous scale across YouTube, paid social, short-form video, and connected TV. But distribution isn’t the same as effectiveness. Many campaigns generate impressive platform metrics while producing little measurable business impact. The problem usually isn’t targeting, budget, or platform choice. It’s a deeper strategic issue: campaigns are optimized for outputs like views and impressions rather than outcomes like attention, persuasion, and action. Most video ads fail because they misunderstand attention Poor targeting, limited budgets, and platform choice are rarely the real problem. The bigger issue is that many video ads are still produced as if they’re television commercials. In the early days of online video, distribution was the challenge. Getting a video seen at all felt like a win. Today, distribution is abundant. Attention isn’t. Every major platform — YouTube, paid social, short-form video, connected TV — competes for fragments of cognitive bandwidth. Users arrive with intent, habits, and expectations that have nothing to do with your campaign. We plan for reach, while viewers respond to relevance. I’ve sat in many meetings where success was defined by impressions delivered or views accrued. But when you look downstream — search lift, site engagement, conversion — the connection often disappears. Platforms will reliably deliver impressions. Turning those impressions into memory, persuasion, or action requires a fundamentally different mindset. Dig deeper: From Video Action to Demand Gen: What’s new in YouTube Ads and how to win The first five seconds are the entire negotiation Skippable formats changed video advertising permanently, but many advertisers still haven’t adjusted creatively. 
Early in my career, I believed strongly in branding up front. Logos, product shots, music cues — everything that signaled professionalism. Those ads looked great in presentations. They underperformed in market. A clear pattern emerged over time. Ads that opened with a recognizable problem, a provocative statement, or an unexpected visual held attention longer — even when branding appeared later. Ads that opened with branding signals were skipped almost reflexively. View-through rate isn’t persuasion. A “view” simply means the platform’s minimum threshold was met. It doesn’t mean the message landed, the brand registered, or the viewer cared. In multiple brand lift analyses, most measurable impact occurred before the skip button appeared. If the opening didn’t earn attention, the rest of the ad didn’t matter. What works: treat the opening frame like a headline, not a preamble. Lead with tension, a question, or a familiar problem. Design for sound-off environments. If the first frame wouldn’t stop a scroll, nothing that follows will matter. Higher production value often correlates with lower performance One of the most counterintuitive lessons in modern video advertising: polished ads frequently underperform scrappier ones. I’ve seen simple, phone-shot videos outperform meticulously produced studio spots across YouTube, paid social, and short-form platforms. Not because quality doesn’t matter — but because perceived authenticity matters more. Audiences are exceptionally good at identifying advertising. When something looks like an ad, they disengage. When it looks like content, they give it a chance. Algorithms reinforce this: they reward watch time, retention, rewatches, and shares. They do not reward lighting setups or production budgets. I’ve seen brands “upgrade” social video to look more premium, only to watch performance decline. The creative looked better. The results were worse. The goal isn’t to look amateurish. It’s to look like you belong. 
Match the platform’s visual grammar. Prioritize clarity over polish. Use real people and authentic voices whenever possible. Ads that feel native get watched. Ads that feel inserted get skipped. Dig deeper: How to get better results from Meta ads with vertical video formats Length is a creative decision, not a media constraint “Shorter is better” is one of the most persistent — and misleading — rules in video advertising. Six-second ads can work. So can 60-second ads. I’ve seen both exceed expectations, and I’ve seen both fail badly. The difference was never duration — it was justification. Some messages can be delivered instantly. Others require context, proof, or emotional buildup. Forcing every idea into the same runtime produces predictable results: safe, bland, forgettable ads. I’ve reviewed retention graphs where a 45-second ad held viewers longer than a 15-second version, because the story justified its length. I’ve also seen six-second ads lose half their audience in the first two seconds because they wasted the opening. Test multiple edits, not just multiple lengths. Watch retention curves, not averages. Build modular narratives: hook, then value, then proof, then action. The “right” length is however long it takes to make the viewer feel their time was respected. Metrics are signals Platforms provide more data than ever. The problem isn’t a lack of metrics. It’s confusing metrics with outcomes. I’ve seen campaigns praised for high completion rates that produced no measurable business impact. Strong engagement coexisting with low conversion. Impressive view counts that delivered zero lift. This happens because platforms optimize for their success metrics, not yours. If your goal is to maximize views, the platform can do that easily. If your goal is to influence consideration, preference, or action, things get more complicated. 
One uncomfortable question I’ve learned to ask early: what would failure look like here? If the answer is vague, the campaign is already at risk. Define success in business terms before launch. Tie video metrics to downstream behavior wherever possible. Use lift studies, holdouts, or assisted conversions when they’re available. If you’re running a brand-building campaign, measure brand lift. If you’re running a performance campaign, measure conversions. Dig deeper: AI for video advertising: 5 best practices for PPC campaigns The brief is usually where things go wrong Creative is often blamed when video ads underperform. In reality, creative usually does exactly what it was asked to do. The problem is the brief. Vague objectives produce generic ads. “Brand awareness” without context leads to unfocused messaging. “Make it engaging” isn’t a strategy. Strong video ads almost always begin with clear answers to three questions: Who is this really for? What do they care about right now? What should they think, feel, or do differently after watching? When those answers are clear, creative decisions become easier. When they aren’t, the work is compromised before production begins. The deeper diagnostic questions are worth keeping close: Are viewers actually paying attention, or just passively present? What are they feeling — and which specific creative choices are driving that response? Will they remember the brand once the ad ends? What will they do next — share it, recommend it, search for the product, or buy? I’ve seen entire campaigns improve simply because the brief forced alignment around audience insight rather than assumptions. Distribution strategy is part of the creative Another common mistake is treating creative and distribution as separate decisions. They aren’t. The way an ad is consumed — fullscreen versus feed, sound-on versus sound-off, lean-back versus lean-forward — should shape how it’s made. 
A video designed for connected TV shouldn’t simply be resized for mobile. A short-form ad shouldn’t be a truncated long-form story without rethinking the hook entirely. I’ve seen strong ideas underperform because the creative didn’t match the placement. The concept wasn’t wrong. The context was. Design with placement in mind from the start. Create platform-specific versions, not one-size-fits-all assets. Accept that “reuse” often means “rethink,” not “repurpose.” Distribution constraints aren’t limitations — they’re creative inputs. Dig deeper: How to dominate video-driven SERPs Testing should answer questions, not just generate variants Testing is indispensable. It’s also frequently misunderstood. Running endless A/B tests without a hypothesis rarely produces insight. It produces noise. The most effective testing focuses on variables that materially affect attention and comprehension: opening frames, narrative structure, on-screen text versus voiceover, proof points versus emotional appeals. It’s also important to recognize what testing can’t do. Algorithms are excellent at optimizing toward measurable signals. They don’t understand brand equity, long-term memory, or cumulative effect. Testing should inform judgment — not replace it. Ultimately, the only thing that matters for creative effectiveness tools is whether their predictions actually correlate to real media and sales outcomes — reliably enough to inform strategy and media decisions. The question worth asking of any such tool is simple: How often does what it predicts will happen actually happen? For example, I frequently cite data from DAIVID, an AI-driven creative effectiveness platform. Why? Because in independent testing, DAIVID’s predictions aligned with real-world outcomes more than 80% of the time — a meaningful foundation for making creative decisions with greater confidence before a campaign goes live. 
Optimize for people Platforms will change. Formats will evolve. Algorithms will shift in opaque and sometimes frustrating ways. But attention, curiosity, and trust remain stubbornly human. The best video ads I’ve worked on weren’t optimized for view counts or completion rates. They were optimized for relevance. They respected the viewer’s time. They said something worth hearing. Video ads don’t succeed because they follow platform rules. They succeed because they understand people. And that principle outlasts every algorithm update. View the full article

-

Google: Most Sites Don't Need To Disavow Links But That's Not All Sites

Google's John Mueller again spoke about the disavow link file. This time he said that "most sites don't need it," but added that "that's not all sites." Some sites may indeed need to disavow links. View the full article

-

Bing Search Tests Go To Shopping Button

Microsoft is testing a "Go to Shopping" button within the Bing Search results. It appears in place of the narrower shopping section that shows shopping results but just says "see all." View the full article

-

Bing With Asian Owned Labels On Microsoft Ads

A few years ago, Microsoft Advertising introduced support for Asian-owned labels and attributes on its search ads within Bing. Google has a similar attribute, by the way. Honestly, I've never seen the label on Bing until now. View the full article

-

Local governments could deploy AI for good. Here’s how

When considering AI’s impact in cities, many residents and government officials envision a dark future of unbridled surveillance, hollowed-out city halls and unaccountable bots calling the shots based on biased training data. We, on the other hand, embrace a much more optimistic vision. With ambitious local leadership, AI, and especially the coming wave of agentic AI, can offer a profound opportunity not only to make government services more efficient but also to transform how cities fulfill their end of the social contract. As long-time public servants and champions of government innovation at our respective universities, we understand the challenges local governments face, including tight budgets, aging infrastructure and dissatisfied residents accustomed to the speed of Amazon and personalization of Spotify. Most cities still run on a century-old operating system built on bureaucracy, paper files, agency silos and rigid hierarchy. Agentic AI offers a unique opportunity to redesign how cities work, a model we call the “Agentic City.” Agents, city employees, and citizens working together Imagine a city administration where the complexity of navigating government bureaucracy is offloaded to intelligent agents—so routine tasks happen flawlessly, and even complex ones feel simple. A mother reports a broken sidewalk near her child’s school, snaps a photo, and sends it to the city. An AI agent classifies the problem, routes it to the right crew, tracks progress across agencies, proactively updates her until the work is done, and alerts others to similar risks nearby. Imagine a city that fixes pavement cracks before they become potholes, changes street lamps before they burn out, and repairs water lines before they leak. Yet even these dramatic improvements will only constitute steps in a transformation. 
These tools help reform-minded mayors adopt a system approach that sidesteps the strong headwinds often confronting business reengineering, including efforts to integrate agency functions or disparate data systems. Transportation officials no longer need to tweak signals; an AI traffic agent can balance safety, travel time and emissions. An Agentic City will be one in which agents, public employees and residents work together. Ultimately, all city services will be personalized as residents use an “agentic front door” to state their goals (“want to open a barber shop at 10th and Main”). Agents will walk users through the process or even complete those tasks for them. At the same time, a human monitors the results, troubleshoots and takes on difficult or unusual cases. In fact, this city offers preemptive housing vouchers, rental assistance, and property tax relief to those who qualify, obviating the application maze entirely. A systemic approach Getting there will require strong leadership to overcome gaps in imagination, skill deficits, and employee anxiety, compounded by the complexity of ensuring that AI changes comply with democratic values. Local leaders will need to take a systematic approach, crafting a powerful narrative of the service benefits while using their political and legal skills to negotiate with the city council, union, and employee leaders. AI-driven transformation requires a leadership team supported by academic and other local experts who understand the city’s technical capacity, legal and data limitations, and that stretches the imagination of a bureaucracy accustomed to existing processes. That team should establish a pathway for opportunities for both employees and residents, including the agentic front door, repetitive functions that can be outsourced to AI, and more time for staff to take on higher-value purposes: investigating root causes, engaging communities, and exercising judgment. 
Third, the leadership team should promote the incorporation of agentic capabilities that help employees identify patterns and causes of recurring problems by making data more easily accessible. Municipal workforces, both union and nonunion, represent a key stakeholder. Mayors need to be clear that AI will complement, not replace, the workforce. An Agentic City initiative would include outreach to labor to set the parameters of a new bargain in which workers, armed with data insights, increase productivity and share in the benefits through pay increases. Data literacy training and a data governance framework should also be essential components. Freed of repetitive tasks, public employees can focus on higher-value work. The data foundation Addressing these concerns responsibly begins with the system’s foundation: the data. Cities must invest in data pipelines that are not merely machine-readable but machine-understandable—structured with rich metadata, shared ontologies, and business-logic context—so that both humans and AI agents can interpret meaning, constraints, and appropriate use. Emerging approaches such as Model Context Protocols (MCPs), which standardize how AI systems access structured data and operational tools, represent a promising step in this direction by helping agents understand not only what data exists but also how it should be used. An agent that can “see” a permit record but not understand the regulatory framework, eligibility rules, or data quality limitations behind it will act inconsistently and require constant human correction. Machine-understandable data reduces that friction and makes agentic systems more reliable, transparent, and scalable. In short, the foundation of an Agentic City is not just smarter algorithms, but smarter data architecture. Implementing an agentic city hall presents substantial challenges. However, now is the time to lead, as mayors cannot afford to maintain the status quo or wait for the AI tsunami. 
Going forward presents challenges as well. Doing nothing poses a greater risk than getting started, and the evidence will be a city that, through more meaningful work for its employees, becomes more responsive to its residents. View the full article

-

Google Local Service Ads Won't Credit Calls For Existing Clients (Not Lead)

Google Local Service Ads can be super expensive; each call or click can cost hundreds of dollars. Which is why Google has generally been good about refunding for mistaken leads or issues with those leads. View the full article

-

Global bonds slump as Iran war upsets rate-cut bets

Debt markets head for worst week in more than a year after energy price surge sparks inflation fears. View the full article

-

Google Ads Customer Match Data Uploads Changes Coming April 1

Google sent out emails to some advertisers about changes coming to Customer Match uploads in the Google Ads API. After April 1, 2026, those data uploads will no longer work in the Google Ads API and must be done through the Data Manager API instead. View the full article

-

New York lawmakers want AI chatbots to stop pretending to be doctors or lawyers

New York is the latest state to consider a bill that would prohibit AI chatbots from dispensing advice that licensed professionals would normally give, such as medical or legal advice. The bill would also allow people who believe they were harmed by such advice to sue the operator of the chatbot. Senate Bill S7263, introduced by Democratic state Senator Kristen Gonzalez, passed out of a technology committee on a 6–0 vote last week and now advances to a reading on the floor of the Senate. Interestingly, the bill requires operators to clearly label their chatbots as AI, but stipulates that such a label isn’t enough to shield them from lawsuits under the statute. The proposal reflects a growing shift in how policymakers are thinking about AI. While early efforts focused mostly on transparency, lawmakers are beginning to explore something arguably more consequential: whether companies should be legally liable when AI systems give advice that causes real-world harm. The bill applies to chatbots that give advice in the fields of medicine, law, dentistry, veterinary medicine, physical therapy, pharmacy, nursing, podiatry, optometry, engineering, land surveying, geology, architecture, psychology, and social work. New York isn’t acting alone. Other states have passed or are considering similar laws, though with varying scopes and enforcement methods, and they tend to focus primarily on healthcare: California’s AB 489, enacted in 2025, does something similar but with a narrower scope, targeting AI systems that misrepresent their information as coming from licensed healthcare professionals. But AB 489 relies on state healthcare boards for enforcement and doesn’t provide a private right of action (civil suit) for legal recourse. A new Nevada law, AB 406, which went into effect last July, prohibits the advertising and operation of AI systems designed to dispense professional mental and behavioral healthcare therapy. 
The law also limits how licensed professionals can use AI in their practices. Last August, Illinois passed HB 1806, which prohibits licensed therapists in the state from using AI to make treatment decisions or communicate with clients. The law also prohibits tech companies from advertising or offering AI-powered therapy services in the state without the involvement of a licensed professional. Utah passed a similar law, HB 452, that puts restrictions and disclosure requirements on chatbots that appear to offer an alternative to human mental health therapy or advice. The law went into effect in 2025. Professional medical groups have also begun weighing in on the risks. The American Medical Association doesn’t call for a ban on AI chatbots dispensing health information, but it worries that consumer advice from LLMs might be false or misleading. “Notably, tools such as ChatGPT have shown a not-uncommon tendency to falsify references cited in response to these queries,” the AMA says in a policy paper, adding that AI tools have demonstrated the ability to generate fraudulent scientific or medical literature to support health advice. Mental health advice is a particularly sensitive area, perhaps because many chatbot users, especially younger ones, use AI as a counselor or therapist. A 2025 JAMA Network study found that 13% of all respondents used chatbots for mental health advice, with 22% of those ages 18 to 21 doing so. AI companies have recognized a large and growing addressable market for AI mental health chatbots, in part because many consumers struggle to find an affordable therapist. For some consumers, the argument goes, accessing mental health advice from a chatbot is better than receiving none at all. The American Psychological Association says chatbots could actually be worse than nothing at all. That’s because of AI models’ tendency to relate to humans in a sycophantic way. 
The group said in a presentation to the Federal Trade Commission that “A.I. chatbots ‘masquerading’ as therapists, but programmed to reinforce, rather than challenge a user’s thinking, could drive vulnerable people to harm themselves or others.” Senator Gonzalez will likely have to explain why her S7263 bill targets chatbots as a source of legal or medical information but not traditional search engines. She might cite a 2025 experimental study showing that people “over-trust” AI. (She declined to comment for this story.) The researchers write that AI chatbots often sound more convincing and trustworthy than search engine results—even when they’re wrong, off-topic, or lacking context. The study also found that participants couldn’t reliably distinguish AI responses from those from real doctors, and often rated AI responses as more trustworthy and complete. Another study by Oxford and MLCommons involving nearly 1,300 participants found that using AI models to evaluate and analyze symptom scenarios led to health decisions (“should I take some aspirin? Should I go to the ER?”) that were no better than decisions based on personal knowledge or information gleaned from traditional internet search. The study also found that users often don’t know what details the AI needs to generate an answer, and that the AI’s outputs often blend correct and incorrect recommendations. The AMA says it would like to see the Federal Trade Commission regulate AI chatbots that dispense health information, but believes the agency currently lacks the resources to take on that role. Instead, it calls on the tech companies behind the chatbots to continually review the accuracy of their models and give users an easy way to report when a chatbot outputs inaccurate health information. View the full article

-

British strikes on targets in Iran would be lawful, says deputy prime minister

David Lammy says deploying RAF jets to protect British nationals and staff would be ‘entirely legal’. View the full article

-

What Are Secondary Keywords? (And How to Use Them)

Secondary keywords are how you capture that extra traffic. They’re the supporting terms that help your page rank for more searches without creating separate content for each variation. In this guide, you’ll learn what secondary keywords are, how to find… View the full article

-

AI Max Brand Controls Expand, VRC Non-Skip Ads Go Global – PPC Pulse via @sejournal, @brookeosmundson

AI copy guardrails and expanded YouTube non-skip video formats headline this week’s Google Ads updates in PPC Pulse. The post AI Max Brand Controls Expand, VRC Non-Skip Ads Go Global – PPC Pulse appeared first on Search Engine Journal. View the full article

-

Grocery Outlet is closing stores, joins growing list of retail chains shuttering locations in 2026

Grocery Outlet is joining the ranks of retailers planning to shutter storefronts this year. The discount grocery store chain announced its fourth-quarter and full 2025 fiscal year results on Wednesday, along with a plan to close 36 stores. This move to close stores follows a previous restructuring plan concluded in the second quarter of fiscal 2025, another attempt to “improve long-term profitability” and optimize growth. The company reported an increase in net sales, but an operating loss of $234.8 million and a net loss of $218.2 million in the fourth quarter of 2025. The optimization plan, which is expected to be largely completed during fiscal year 2026, is estimated to result in $14 million to $25 million in net total restructuring charges. This doesn’t include the company’s estimated gross profit loss due to sales discounts or product markdowns as the impacted locations close. At the same time, the fourth-quarter fiscal report noted seven store openings. Trade publication Grocery Dive reported that 24 of the closing stores are on the East Coast, and the company does not intend to slow its expansion, even as it experiences closures. The California-based chain ended the fourth quarter with 570 stores across 16 states, according to its earnings release. Shares of Grocery Outlet Holding Corp. (Nasdaq: GO) plummeted after its report. The stock closed down more than 27% on Thursday and is down more than 37% year to date. Fast Company reached out to Grocery Outlet for additional information on which stores will face closures and the expected impact on surrounding areas. Food access is a growing concern The ongoing trend of grocery store closures continues to raise concerns about food access. Kroger announced closures last year, sparking conversations in local communities about jobs and grocery deserts. Food deserts, or communities that are both low-income and lack a grocery store, are becoming more common across the United States. 
It’s an impact from the 1980s, when the government stopped enforcing the Robinson-Patman Act, an antitrust law that prohibited supplier price discrimination. Now millions of Americans live in food deserts. Organizations like the Institute for Local Self-Reliance (ILSR) map these food deserts, which can spread in response to these store closures. More than 280 Grocery Outlet stores are in California, where approximately 2.7 million people live in a food desert, according to ILSR data. View the full article

-

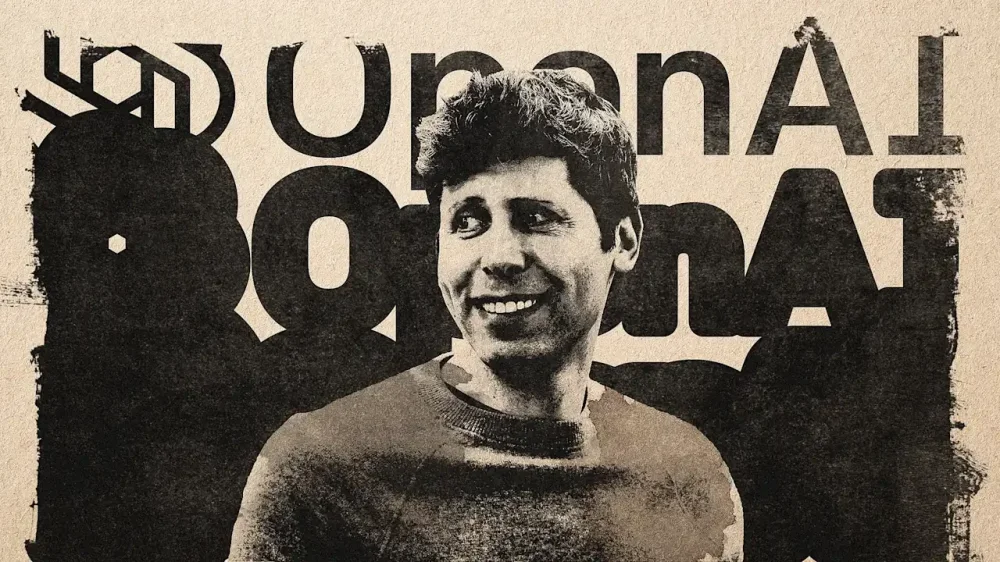

OpenAI just dragged its own brand

It sounds like a brag-worthy business coup: not just snagging a high-profile client, but doing so just after your chief rival’s deal with that same client unraveled in a brutally public way. But artificial intelligence pioneer OpenAI’s Pentagon deal didn’t end up being a brand-halo event. To the contrary, “it just looked opportunistic and sloppy”—and that’s the judgment of OpenAI’s CEO, Sam Altman. Given widespread concerns about the potential downsides of AI, ranging from mass layoffs to robot overlords, “opportunistic and sloppy” are just about the last attributes OpenAI wants to be associated with, perhaps especially in the context of a Department of War partnership. But this isn’t just an image headache; the brand backlash has included a surge of signups for the rival OpenAI seemed to have bested, Anthropic, whose Claude AI leapt past OpenAI’s ChatGPT to the top of the app charts. Some of that surge can be attributed to Anthropic’s behavior and rhetoric matching up to its brand image as a thoughtful steward of AI that’s mindful of its possible consequences. It’s a brand image that was tested recently when Anthropic wanted to add some caveats to the Pentagon’s desire to use its tech for “all legal purposes.” Anthropic’s Claude, then the only AI agent cleared for use in classified operations, had already been used to plan the recent military action against Venezuela (and was used in preparing for the attack on Iran). But this evidently harmonious relationship snagged on Anthropic seeking guardrails that would prevent its technology from being used to enable mass surveillance or autonomous lethality. The Pentagon pushed back, and over a few weeks, this spiraled into an acrimonious and very public split that included petulant criticism from the president. 
The Department of War not only signalled it wanted more compliance as it added AI partners, but threatened to kneecap Anthropic by labeling it a “supply chain risk.” In sticking to its guns, so to speak, Anthropic stayed true to its brand as the serious, non-reckless AI company. In general, Silicon Valley seemed to rally around Anthropic, with employees at Google, Microsoft, and Amazon circulating petitions and open letters urging corporate leadership to follow Anthropic’s example and “hold the line” against objectionable government uses of AI. That was the backdrop when OpenAI’s deal with the Pentagon was announced. While the Department of War had already been in talks with various AI firms to add them to classified use cases, the timing of the announcement came across as if OpenAI was effectively replacing Anthropic. While Altman promised the company had the same “red lines” as Anthropic, it agreed to Pentagon language that permits the technology’s use for “all lawful purposes.” OpenAI insists the contract details establish guardrails, and Altman has said Anthropic should be offered the same deal, and should not be tagged as a security risk. But the timing and what some observers saw as capitulation led to a backlash. Aside from online sniping at OpenAI, the results were plain enough in the app charts, as Anthropic downloads and paid subscriptions spiked. The big-tech Information Technology Industry Council, whose members include Nvidia and Apple, weighed in with a letter of concern about “the Department of War’s consideration of imposing a supply-chain risk designation in response to a procurement dispute.” Research firm Sensor Tower found ChatGPT mobile uninstalls jumped 295%. It was almost the Anthropic vs. Pentagon story run in reverse: Instead of a client battle oddly burnishing a brand, a prestigious new-client deal seemed to blow up in a brand’s face. 
Altman has called the backlash “really painful,” and the result of poor optics rather than any substantial capitulation or opportunism. He reportedly told an all-hands meeting that the deal was a “complex” decision with “extremely difficult brand consequences” in the short term, but ultimately the correct decision. And this may prove right in the long run. Anthropic is back in talks with the Pentagon about salvaging their relationship. And its investors reportedly want to see more diplomacy and less ego from the company; the brand won’t mean much without clients. Meanwhile there’s still plenty of room for OpenAI to be opportunistic, but maybe do a better job at not looking opportunistic—because the best way to avoid “difficult brand consequences” is to anticipate them. View the full article

-

Axel Springer poised to buy Telegraph in £500mn deal

German media group has gatecrashed a proposed acquisition by the owner of the Daily Mail. View the full article

-

Your employees aren’t disengaged. They’ve got screen fatigue

For the past few years, leaders have been trying to decode what’s happening to attention at work. We’ve debated burnout, quiet quitting, and whether younger employees simply approach productivity differently than previous generations. But new workplace data suggests something far more basic may be happening: many employees aren’t disengaged; they’re visually exhausted.

New research from VSP Vision Care and Workplace Intelligence found that desk workers now spend nearly 100 hours each week looking at screens, with most reporting that digital eye strain is directly affecting their productivity. Workers experiencing visual discomfort say it reduces their output by nearly a full workday each week, a number that should give leaders pause.

It would be easy to frame this as a wellness story or a benefits conversation. But that misses the bigger picture. The data isn’t just about eye health. It’s about how modern work has been designed, and what leaders choose to normalize.

We may be measuring engagement while ignoring endurance

When performance dips, organizations often look first at motivation or culture. Are employees committed? Are they resilient enough? Do they care about the work? Those questions matter, but they can distract from a quieter reality: the modern workplace now demands an unprecedented level of visual intensity.

According to the research, desk workers spend roughly 93% of their waking weekday hours focused on screens. Think about what that means in practice. Back-to-back video calls. Endless message notifications. Constant toggling between documents, dashboards, and email threads. Even roles that once involved physical movement or conversation have shifted toward screen-based workflows.

Over time, that level of visual demand changes how people sustain focus. Fatigue builds slowly, and when it finally shows up as distraction or irritability, leaders often interpret it as disengagement rather than overload. But human attention isn’t limitless. And when work requires uninterrupted visual concentration for hours on end, the issue isn’t necessarily a lack of commitment but rather a lack of thoughtful design.

Digital fatigue is a culture issue hiding behind a health statistic

One of the most revealing findings in the study isn’t just how much screen time employees report, but how little organizational support exists around it. Only about a third of workers say their company actively encourages eye breaks or provides education about managing digital strain, even though most HR leaders acknowledge more should be done.

That gap tells us something important about workplace culture. Many organizations have unintentionally equated productivity with constant digital presence. Being visible online becomes a proxy for being valuable. The result is an environment where stepping away from a screen, even briefly, feels risky.

Leaders rarely intend to create this pressure. But when expectations around responsiveness remain unclear, employees fill in the blanks themselves. They stay online longer, respond faster, and push through discomfort to signal commitment. Eventually, that behavior becomes the norm. And when productivity starts to slip, we look for explanations everywhere except the most obvious one: we’ve built a system that asks people to maintain visual intensity longer than is sustainable.

Why Gen Z isn’t resisting work: they’re questioning the structure

Working closely with Gen Z students and early-career professionals has shown me something important: younger employees aren’t rejecting effort. They’re challenging assumptions about what effective work actually looks like. They question camera-on expectations that prioritize appearances over outcomes. They ask why meetings need to run an hour when decisions could be made in twenty minutes. They push for clearer boundaries around communication instead of constant availability. Some leaders interpret this as impatience or a lack of resilience. I see it differently.
Gen Z entered the workforce during a period of rapid digital acceleration, and they’re often the first to notice when systems create friction. Their questions can feel uncomfortable, but they also offer valuable feedback. If an entire generation is pushing back on nonstop screen time, it may be less about generational differences and more about a workplace model that hasn’t caught up with human limits.

Leaders don’t need more wellness programs. They need better structure.

Addressing digital eye strain doesn’t require a complicated initiative. It requires leaders to rethink how work is organized day to day. That might include:

- Designing meetings with built-in visual breaks or audio-only segments
- Leaving intentional gaps between calls so employees can reset their attention
- Setting clear expectations around response times to reduce the pressure of constant monitoring
- Modeling healthy digital boundaries rather than praising nonstop availability

These shifts may seem small, but they change the message employees receive. Productivity stops being about how long someone stays glued to a screen and starts being about the quality of their contribution.

In my own leadership work, I often talk about balancing kindness, fairness, and structure. Visual fatigue sits at the intersection of all three. Acknowledging human limits reflects kindness. Creating clear expectations around availability reflects fairness. And designing workflows that support sustainable focus reflects strong structure. When one of those elements is missing, employees feel it, even if they can’t articulate why.

The real risk isn’t eye strain. It’s misreading the signal.

When attention dips, leaders often assume disengagement. When someone turns their camera off, we question their commitment. But what if those behaviors aren’t signs of withdrawal at all? What if they’re adaptive responses to an environment that demands more visual endurance than most people can sustain? When leaders misread these signals, they create unnecessary tension. Employees feel misunderstood. Managers feel frustrated. And the real issue, an unsustainable rhythm of work, goes unaddressed.

The modern workplace has quietly redefined focus as nonstop visual attention. But people don’t perform at their best by staring at screens longer. They perform better when leaders design work with intention. Digital eye strain isn’t just a health warning. It’s a leadership signal. And the future of work won’t belong to organizations that demand constant presence, but to those that know when it’s time to look up, step back, and lead differently. View the full article