All Activity

- Past hour

-

Google Ads retargeting: A guide to your data segments

One of the most profitable Google Ads targeting tactics is retargeting: showing ads to people who are already familiar with your business.

But if you still think that “retargeting” means a Display campaign chasing users around the web with banner ads, you’re missing out on how “Your data segments” actually function today.

Let’s explore how you can leverage your proprietary audience data in new ways, and what mistakes to avoid in 2026 and beyond.

What are “Your data segments” in Google Ads?

Retargeting means showing ads to people who are already familiar with your business. Google uses the euphemistic name “Your data segments” to refer to all the retargeting lists in your account.

What types of retargeting can you do in Google Ads?

A variety of different retargeting methods are available in Google Ads. They mirror what you’ll find on other ad platforms like Meta, LinkedIn, or TikTok. I find it helpful to group them into four categories:

- Website Visitors: This is the standard one: people who have visited your website. You collect this data using Google Tag Manager or Google Analytics.
- App Users: If you have a mobile app, you can pull data from Firebase or other third-party analytics tools into Google Ads for retargeting.
- Customer Match: This is the “holy grail” of retargeting. You take your business’s first-party data (email addresses, phone numbers, etc.) and upload it directly to Google Ads, so that Google can find those same users across its platforms.
- Content Engagers: People who have interacted with your content on Google-owned properties. Examples include a segment of users who have watched your YouTube videos, or a segment of users who have clicked through to your site from search results (this is called the Google Engaged Audience, which we explored in another article).

Should you upload “your data segments” if you’re not planning to do retargeting?

Many practitioners overlook this detail: your data segments aren’t just about ad targeting.

Even if you don’t have a single retargeting campaign running, the mere existence of these lists in your account provides a vital signal for Smart Bidding and Optimized Targeting.

For example, when you upload a customer list, you’re telling Google, “These are the people who actually buy from me.” Even if you never add that list to your audience signal in Performance Max, Google will still use it to understand likely converters and adjust bidding/targeting accordingly.

Similarly, let’s say you only run Search and Shopping campaigns, and you use Target ROAS bidding. When Google is trying to set the right bid for the right user at the right time, that user’s presence (or lack thereof) on a “your data segment” list is one of many signals incorporated into that bidding calculation.

How can you use retargeting lists in Google Ads?

Different campaign types handle audience data differently. It’s important to know the distinction so you can plan your targeting strategy accordingly.

- Search, Shopping, Display: In these campaigns, you have three options with Your data segments: Targeting, Observation, Exclusion.
  - Targeting means your ads will only show if the user is a member of your data segment.
  - Observation allows you to see your campaign data segmented by list, without narrowing your reach.
  - Exclusion means your ads will only show if the user is NOT a member of your data segments.
- Performance Max and App Campaigns: In these AI-powered campaigns, you can include Your data segments as part of your audience signal. Performance Max recently added the ability to exclude Your data segments as well.
- Demand Gen: In Demand Gen, you can Target and Exclude Your data segments, but there is no “Observation” option.

If you’re new to retargeting, I find Demand Gen the best place to start. It’s built for visual storytelling and works well with the Google Engaged Audience or basic website visitor lists.

If you have some experience with retargeting campaigns, you might want to try New Customer Acquisition or Customer Retention mode in PMax or Shopping, as these are powered by Your data segments.

What’s the biggest retargeting mistake to avoid?

Over-segmenting. I know it can be tempting to create 50 different lists: “People who visited the cart on a Tuesday,” or “People who looked at three pages but didn’t click the ‘About’ section.”

Unless you’re spending six figures or more every month, this level of granularity doesn’t help, and may actually hurt your campaigns. Google’s AI needs data density to learn. When you slice your audience into tiny slivers, you don’t have enough “matched records” for the system to optimize.

Upload your unique data to Google Ads, keep your strategy simple, and let the bidding algorithms do the heavy lifting in driving returning customers for you.

This article is part of our ongoing Search Engine Land series, Everything you need to know about Google Ads in less than 3 minutes. In each edition, Jyll highlights a different Google Ads feature, and what you need to know to get the best results from it, all in a quick 3-minute read.

-

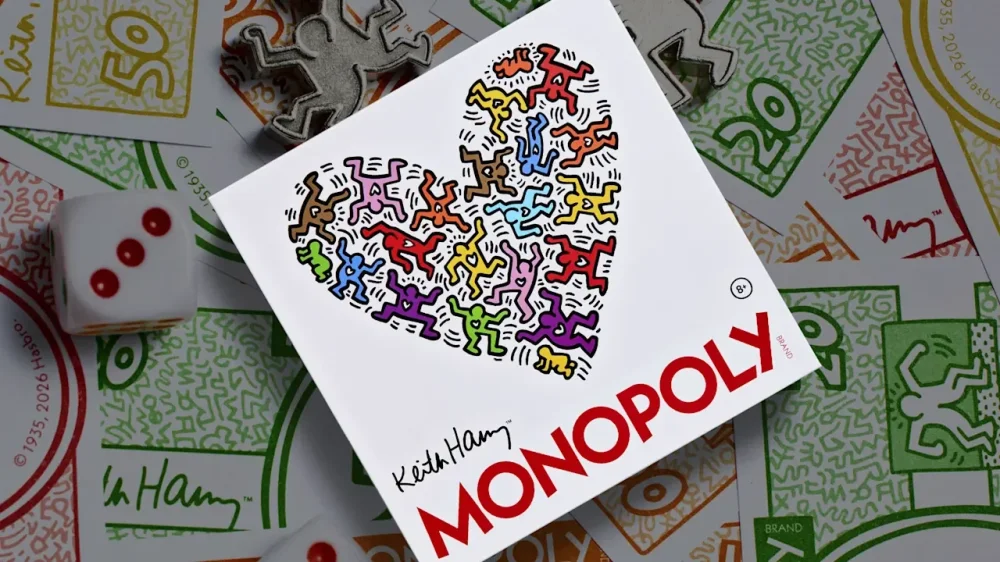

This gorgeous game of Monopoly tells the story of Keith Haring’s life

Since its invention in 1903, the classic Monopoly board game has spawned such a plethora of spin-offs that they nearly span the breadth of all possible human interests. From gardening and beer drinking to the FIFA World Cup, Star Wars, and the city of Edinburgh, Scotland, if you can think of it, there’s a fair chance it’s been turned into a Monopoly game.

Now there’s yet another version out there. This one celebrates the life and legacy of artist Keith Haring in a design by WS Game Co., a licensee of Hasbro (Monopoly’s parent company) that specializes in deluxe versions of classic tabletop games. For the 40th anniversary of Haring’s iconic New York City store, Pop Shop, WS Game Co. took inspiration from his portfolio of art, as well as the events of his life, to create the fully customized Keith Haring Monopoly game, now available on its website for $80.

For game designers, Monopoly is something of a chameleon: The game’s simple setup, location-based play, and variety of physical pieces make it a perfect canvas for adaptation. The total figure is difficult to nail down, but some Monopoly-heads estimate that more than 1,500 official spin-offs have been produced. The game can bend to fit any universe or story, from a sci-fi epic to an ode to a small town. In this case, it doubles as a display-worthy piece of art.

Art that’s become ubiquitous

It was only a matter of time before someone decided to create Keith Haring Monopoly, considering how ubiquitous the late artist’s work has become in pop culture and brand collaborations. Since his death in 1990, Haring’s art has appeared on everything from Legos to Dr. Martens and inflatable home decor.

For Haring, ubiquity was often the goal. He made his name through graffiti and public art, which he used to spread a message of equality and acceptance to the masses. In 1986, Haring opened the Pop Shop in SoHo, where he sold buttons, stickers, posters, and other prints of his work for ultra-low prices. At the time, the move was intensely criticized by the art world, though now it’s recognized as a distinctly ahead-of-its-time approach.

A very Haring board game

To capture the legacy of both Haring and his iconic shop in Monopoly form, WS Game Co. worked closely with Artestar, the licensing agency that manages Haring’s estate. Kerry Silva Addis, owner of WS Game Co., says each property on the board represents an important location from Haring’s life, highlighting everything from his birthplace to the schools he attended and the studio spaces he used.

“Players travel from his hometown of Kutztown, Pennsylvania, to iconic New York City landmarks and nightclubs, as well as the subway stations where he first gained recognition for his signature white chalk drawings on vacant advertising panels,” Addis says.

Naturally, Haring’s art is also incorporated throughout the game. The die-cast metal tokens include 3D versions of his works Radiant Baby and Barking Dog. The houses are actually multicolored dancing figures, and the hotels are boom boxes. The Chance and Community Chest cards have been replaced with “Heart” and “Pop Shop” cards, which feature Haring’s signature heart illustration and the shop’s logo, respectively. Even the game board’s railroad spaces have been replaced with iterations of the drawings Haring posted in NYC subway stations in the 1980s.

In an ode to Haring’s distinctive style, the front of the game box includes a heart-shaped gathering of dancing figures that could easily be repurposed as its own piece of mountable artwork.

“Throughout the process, it often felt like we weren’t just interpreting his work, but truly collaborating with Keith himself,” Addis says. “Immersing ourselves in his visual language, philosophy, and social impact gave the project a profound sense of purpose. Honoring his legacy in a way that feels genuine was truly a once-in-a-lifetime opportunity.”

-

The 7.6x Machine: How Grassroots Firms Are Taking Private Equity for a Ride

Fewer than 200 investors triggered almost 900 acquisitions. By CPA Trendlines


-

Google Zero Is A Lie

Uncover the truth behind the Google Zero narrative and its implications for traffic from Google Search and Discover. The post Google Zero Is A Lie appeared first on Search Engine Journal.

- Today

-

How to turn Claude Code into your SEO command center

Lately, I’ve been spending most of my day inside Cursor running Claude Code. I’m not a developer. I run a digital marketing agency. But Claude Code within Cursor has become the fastest way for me to handle many tasks I want to do, including pulling and analyzing data from Google Search Console, GA4, and Google Ads.

The setup takes about an hour. After that, you can ask things like “which keywords am I paying for that I already rank for organically?” and get an answer in seconds instead of spending an afternoon with spreadsheets. (I wouldn’t have been the one spending an afternoon with spreadsheets anyway, but now nobody has to.)

Here’s the step-by-step process I developed while analyzing data for our agency clients. If this looks too technical, paste the URL of this article into Claude and ask it to walk you through it step by step.

What you’re building

What you end up with is a project directory where Claude Code has access to Python scripts that pull live data from your Google APIs. You fetch the data, it lands in JSON files, and then you just talk to it.

No dashboards to build. No Looker Studio templates to maintain. You’re basically giving Claude Code the same data your team would look at, and letting it do the cross-referencing.

```
seo-project/
├── config.json                  # Client details + API property IDs
├── fetchers/
│   ├── fetch_gsc.py             # Google Search Console
│   ├── fetch_ga4.py             # Google Analytics 4
│   ├── fetch_ads.py             # Google Ads search terms
│   └── fetch_ai_visibility.py   # AI Search data
├── data/
│   ├── gsc/                     # Query + page performance
│   ├── ga4/                     # Traffic by channel, top pages
│   ├── ads/                     # Search terms, spend, conversions
│   └── ai-visibility/           # AI citation data
└── reports/                     # Generated analysis
```

Step 1: Set up Google API authentication

Everything runs through a Google Cloud service account. One service account covers both GSC and GA4, which is nice. Google Ads needs its own OAuth setup, which is less nice but manageable.

Service account (for GSC + GA4)

- Create a project in Google Cloud Console.
- Enable the Search Console API and Google Analytics Data API.
- Create a service account under IAM & Admin > Service Accounts.
- Download the JSON key file.
- Add the service account email as a user in your GSC property (read access is enough).
- Add it as a Viewer in your GA4 property.

The service account email looks like your-project@your-project-id.iam.gserviceaccount.com. You’ll add this email address to each client’s GSC and GA4 properties, the same way you’d add any team member.

For agencies: one service account works across all clients. Add it to each property, update a config file with the property IDs, and you’re set.

Google Ads authentication

Google Ads is different. You need:

- A developer token from the Google Ads API Center (under Tools & Settings > Setup > API Center).
- OAuth 2.0 credentials from Google Cloud (a separate OAuth client, not the service account).
- A one-time browser authentication to generate a refresh token.

The developer token requires an application. For agency use, describe it as “automated reporting for marketing clients.” Approval usually takes 24-48 hours.

If you’re using a Manager Account (MCC), one developer token and one refresh token cover all sub-accounts. You just change the customer ID per client.

If you don’t have API access or an MCC (maybe it’s a new client and you’re still getting set up), you can skip the API entirely. Download 90 days of keyword and search terms data as CSVs from the Google Ads UI, drop them in your data directory, and Claude Code will work with those just as well. That’s how we handle clients who aren’t in our MCC yet.

Install the Python dependencies

All the examples below assume you’re working in the terminal on a Mac or Linux machine. If you’re on Windows, the easiest path is Windows Subsystem for Linux (WSL).

```
pip install google-api-python-client google-auth google-analytics-data google-ads
```

Step 2: Build the data fetchers

Each fetcher is a short Python script that authenticates, pulls data, and saves JSON. I didn’t write these from scratch. I described what I wanted to Claude Code and it wrote them.

One thing that genuinely surprised me: I never had to read the API documentation. Not for GSC, GA4, or Google Ads. I’d say something like “I want to pull the top 1,000 queries from Search Console for the last 90 days,” and Claude Code would figure out the authentication, endpoints, and query parameters. It already knows these APIs. You just tell it what data you want.

Here’s what the scripts look like.

Google Search Console fetcher

```python
from google.oauth2 import service_account
from googleapiclient.discovery import build

SCOPES = ['https://www.googleapis.com/auth/webmasters.readonly']


def get_gsc_service():
    # Authenticate with the service account key downloaded in Step 1
    credentials = service_account.Credentials.from_service_account_file(
        'service-account-key.json', scopes=SCOPES
    )
    return build('searchconsole', 'v1', credentials=credentials)


def fetch_queries(service, site_url, start_date, end_date):
    # Dates are 'YYYY-MM-DD' strings; returns up to 1,000 query rows
    response = service.searchanalytics().query(
        siteUrl=site_url,
        body={
            'startDate': start_date,
            'endDate': end_date,
            'dimensions': ['query'],
            'rowLimit': 1000
        }
    ).execute()
    return response.get('rows', [])
```

You get back queries with clicks, impressions, CTR, and average position. Save it as JSON.
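Saving the rows can be as small as the sketch below. It assumes the list returned by `fetch_queries` and the `data/gsc/` folder from the project layout; the `save_rows` helper name, the file naming, and the sample row values are illustrative and not part of the original scripts.

```python
import json
from pathlib import Path


def save_rows(rows, out_dir, name):
    """Write API rows to <out_dir>/<name>.json and return the file path."""
    out_path = Path(out_dir) / f"{name}.json"
    # Create data/gsc/ (or any other target folder) if it does not exist yet
    out_path.parent.mkdir(parents=True, exist_ok=True)
    with out_path.open("w", encoding="utf-8") as f:
        json.dump(rows, f, indent=2, ensure_ascii=False)
    return out_path


# Made-up row in the shape Search Console returns for the 'query' dimension
sample_rows = [
    {"keys": ["mba programs online"], "clicks": 42, "impressions": 1300,
     "ctr": 0.0323, "position": 7.4},
]

# Hypothetical usage:
# save_rows(fetch_queries(service, site_url, start, end), "data/gsc", "queries")
```

Writing one file per source and date range keeps the raw data easy to spot-check later against whatever Claude Code reports.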
GA4 fetcher

```python
from google.oauth2 import service_account
from google.analytics.data_v1beta import BetaAnalyticsDataClient
from google.analytics.data_v1beta.types import (
    RunReportRequest, DateRange, Metric, Dimension
)


def get_ga4_client():
    # Same service account key as the GSC fetcher, with the Analytics scope
    credentials = service_account.Credentials.from_service_account_file(
        'service-account-key.json',
        scopes=['https://www.googleapis.com/auth/analytics.readonly']
    )
    return BetaAnalyticsDataClient(credentials=credentials)


def fetch_traffic_by_channel(client, property_id, start_date, end_date):
    # One row per default channel group: sessions, users, bounce rate
    request = RunReportRequest(
        property=f"properties/{property_id}",
        date_ranges=[DateRange(start_date=start_date, end_date=end_date)],
        dimensions=[Dimension(name="sessionDefaultChannelGroup")],
        metrics=[
            Metric(name="sessions"),
            Metric(name="totalUsers"),
            Metric(name="bounceRate"),
        ]
    )
    return client.run_report(request)
```

Google Ads fetcher

Google Ads uses something called Google Ads Query Language (GAQL). If you’ve ever written a SQL query, this will look familiar. If you haven’t, don’t worry, Claude Code will write it for you:

```python
from google.ads.googleads.client import GoogleAdsClient

# google-ads.yaml holds the developer token and OAuth credentials from Step 1
client = GoogleAdsClient.load_from_storage("google-ads.yaml")
ga_service = client.get_service("GoogleAdsService")

# cost_micros is reported in millionths of the account currency
query = """
    SELECT
        search_term_view.search_term,
        metrics.impressions,
        metrics.clicks,
        metrics.cost_micros,
        metrics.conversions
    FROM search_term_view
    WHERE segments.date DURING LAST_30_DAYS
    ORDER BY metrics.impressions DESC
"""

response = ga_service.search(customer_id="1234567890", query=query)
```

This pulls the core of the Search Terms report you’d download from the Google Ads UI: search term, impressions, clicks, cost, and conversions. Campaign, ad group, and match type can be added to the SELECT clause (for example, campaign.name and ad_group.name).

Step 3: Create a client config

One JSON file per client. Nothing fancy, just the property IDs and some context:

```json
{
  "name": "Client Name",
  "domain": "example.com",
  "gsc_property": "https://www.example.com/",
  "ga4_property_id": "319491912",
  "google_ads_customer_id": "9270739126",
  "industry": "Higher Education",
  "competitors": [
    "https://competitor1.com/",
    "https://competitor2.com/"
  ]
}
```

Step 4: Ask cross-source questions

So now you’ve got JSON files from GSC, GA4, and Ads sitting in your project directory. Claude Code can read all of them at once and answer questions that would normally mean a lot of tab-switching and VLOOKUP work.

The paid-organic gap analysis

The single most valuable question I’ve found:

“Compare the GSC query data against the Google Ads search terms. Find keywords where we’re paying for clicks but already have strong organic positions. Also, find keywords where we’re spending on ads with zero organic visibility. Those are content gaps.”

When I ran this for a higher education client, it identified:

- 2,742 search terms with wasted ad spend (impressions, zero clicks).
- 351 opportunities to reduce paid spend on terms where organic was already strong.
- 33 high-performing organic queries that paid could amplify.
- 41 content gaps where paid was the only presence (no organic).

That analysis took about 90 seconds. The equivalent manual process (downloading CSVs from GSC and Ads, VLOOKUPing across them, categorizing the overlaps) takes most of an afternoon.

Other questions worth asking

Once you have GSC + GA4 + Ads data loaded:

- “Which pages get the most impressions in GSC but have low CTR? What’s the traffic from GA4 for those same pages?” (identifies meta description/title opportunities)
- “What are the top 20 organic queries by impression that we’re not running ads against?” (paid amplification candidates)
- “Group the GSC queries by topic cluster and show me which clusters have the most impressions but lowest average position.” (content investment priorities)
- “Which pages in GA4 have high bounce rates but strong GSC positions? Those might need content improvement.”

Claude Code isn’t doing anything a human couldn’t do with spreadsheets. It’s doing it in seconds, and you can follow up with another question without rebuilding the whole analysis from scratch.

Step 5: Add AI visibility tracking

Traditional SERP positions aren’t the whole picture anymore. Between Google’s AI Overviews, AI Mode, Copilot, ChatGPT, and Perplexity, you need to know whether AI systems are citing your content.

This is especially true in verticals like higher education, where prospective students increasingly start their research in AI search tools.

If you have a tracking platform

Tools like Scrunch, Semrush’s AI Visibility toolkit, or Otterly.ai will track your brand’s presence across ChatGPT, Perplexity, Gemini, Google AI Overviews, and Copilot. Export the data as CSV or JSON and drop it in your data directory.

Claude Code can then cross-reference AI citations against your GSC and Ads data. When I did this for our own site, we discovered two blog posts competing for the same AI citations on GEO-related queries. One had 12 times as many Copilot citations as the other, despite both targeting similar intent.

That led to a consolidation decision we wouldn’t have made based solely on traditional rank data. This kind of AI search cannibalization is something most SEO teams aren’t yet checking for.

If you don’t have a tracking platform

You don’t need an enterprise tool to start. There are several APIs that let you pull AI search data directly, and the costs are lower than you’d think.
- DataForSEO AI Overview API: The most accessible option. Pay-as-you-go at about $0.01 per query, with a $50 minimum deposit. You send a keyword, and it returns the full AI Overview content from Google SERPs, including which URLs are cited. It also has a separate LLM Mentions API that tracks how LLMs reference brands across platforms.

  ```python
  import requests

  # DataForSEO AI Overview: simplified example
  # auth_headers must hold your DataForSEO credentials (defined elsewhere)
  payload = [{
      "keyword": "best higher education marketing agencies",
      "location_code": 2840,  # US
      "language_code": "en"
  }]
  response = requests.post(
      "https://api.dataforseo.com/v3/serp/google/ai_overview/live/advanced",
      headers=auth_headers,
      json=payload
  )
  # Returns: AI Overview text, cited URLs, references
  ```

- SerpApi: Starts at $75/month for 5,000 searches. Returns structured JSON for the full Google SERP, including AI Overviews. Good documentation, Python client library, and a free tier for testing.
- SearchAPI.io: Similar to SerpApi, starts at $40/month. Also offers a separate Google AI Mode API that captures AI-generated answers with citations.
- Bright Data SERP API: Pay-as-you-go starting around $1.80 per 1,000 requests. Set brd_ai_overview=2 to increase the likelihood of capturing AI Overviews. Also has an MCP server if you want tighter agent integration.
- Bing Webmaster Tools: Free, and the only first-party AI citation data available from any major platform right now. Shows how often your content appears as a source in Copilot and Bing AI responses, with page-level data and the “grounding queries” that triggered citations. No API yet (Microsoft says it’s on the backlog), but you can export CSVs.
- DIY: Direct LLM API Calls: The cheapest approach for small-scale monitoring. Write a Python script that sends a consistent set of prompts to the OpenAI, Anthropic, and Perplexity APIs, then parses responses for brand mentions. Perplexity’s Sonar API is especially useful here because it includes web citations in responses, and citation tokens are free. Total cost: under $20/month for a modest prompt library.

The general pattern: Pick one SERP API for Google AI Overview data, use Bing Webmaster Tools (it’s free), and supplement with direct LLM API calls or a dedicated tracker if budget allows.

The workflow in practice

So what does this actually look like on a Tuesday morning?

Setup: Once per client, ~15 minutes

- Add service account email to client’s GSC and GA4
- Get their Google Ads customer ID (or export search terms if they’re not in the MCC)
- Create a config.json with property IDs

Monthly data pull: ~5 minutes

```
python3 run_fetch.py --sources gsc,ga4,ads
```

Analysis (as needed): Open Claude Code in the project directory and ask questions. The data is right there.

Output: Claude Code generates a markdown report. When I need something client-facing, I push it to Google Docs using a separate tool I built called google-docs-forge. It converts markdown into a properly formatted Google Doc, so the output doesn’t look like it came from a terminal.

The whole process takes about 35 minutes for a new client: setup, fetch, analysis. Monthly refreshes take about 20 minutes, including analysis time. Compare that to the manual alternative of downloading CSVs from three different platforms, cross-referencing in spreadsheets, and writing up findings.

What this doesn’t replace

I don’t want to oversell this. Claude Code is reading your data and finding patterns across sources faster than you can manually. It’s not telling you what to do about those patterns.

You still need someone who understands the client’s business, their competitive situation, and what they’re actually trying to accomplish. The tool finds the interesting data. The strategist decides what to do with it.

You also need to verify what it gives you. LLMs can hallucinate, and that includes data analysis. I’ve seen Claude Code confidently report a number that didn’t match the JSON file. It’s rare, but it happens. Treat the output like you’d treat work from a new analyst: trust but verify, especially before anything goes to a client. Spot-check the numbers against the source data. If something looks too clean or too dramatic, go look at the raw file.

It also doesn’t replace your existing platforms. If you need historical trend data, automated alerts, or a client-facing dashboard, you still want a Semrush or an Ahrefs. What this gives you is the ability to ask ad hoc questions across multiple data sources, which none of those platforms does well on its own.

And the GEO/AI visibility tracking space is still immature. The data from AI citation tools is directionally useful. Wind sock, not GPS. Google doesn’t publish AI Overview or AI Mode citation data through any official API, so every third-party tool is approximating. Bing’s Copilot data is the most reliable because it’s first-party, but it only covers the Microsoft ecosystem.

Start with GSC, layer in the rest

If you want to give this a shot:

- Start with GSC only. It’s the easiest API to connect (service account, read-only access, free). Fetch your queries and pages for the last 90 days. Ask Claude Code to group queries by topic, identify page-2 ranking opportunities, and find pages with high impressions but low CTR.
- Add GA4 second. Same service account. Now you can ask cross-source questions: “Which pages rank well in GSC but have high bounce rates in GA4?”
- Add Google Ads when you’re ready. The OAuth setup is more involved, but the paid-organic gap analysis alone justifies the effort.
- Layer in AI visibility last. Start with Bing Webmaster Tools (free) and one SERP API for AI Overview data.

Each layer builds on the last. You don’t need all four to get value. The GSC + GA4 combination alone surfaces insights that take hours to find manually.
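For spot-checking what Claude Code reports, the paid-organic gap analysis from Step 4 can be approximated in a few lines of Python. This is a minimal sketch, not the method Claude Code uses. The flattened row shapes (`query`, `position`, `search_term`, `cost`), the `classify_terms` name, the sample data, and the "strong organic" cutoff of position 3 are all assumptions chosen for illustration. It also assumes `cost_micros` has already been converted to currency units.

```python
def classify_terms(gsc_rows, ads_rows, strong_position=3.0):
    """Split paid search terms by how well they are covered organically.

    gsc_rows: dicts with 'query' and 'position' (average organic position).
    ads_rows: dicts with 'search_term' and 'cost' (account currency).
    Returns (reduce_spend, content_gaps), each a list of (term, cost).
    """
    # Normalize case and whitespace so "Online MBA" matches "online mba"
    organic = {r["query"].strip().lower(): r["position"] for r in gsc_rows}
    reduce_spend, content_gaps = [], []
    for row in ads_rows:
        term = row["search_term"].strip().lower()
        if row["cost"] <= 0:
            continue  # no spend on this term, nothing to reallocate
        position = organic.get(term)
        if position is None:
            # Paid is the only presence: candidate content gap
            content_gaps.append((term, row["cost"]))
        elif position <= strong_position:
            # Already ranking near the top: candidate to reduce paid spend
            reduce_spend.append((term, row["cost"]))
    return reduce_spend, content_gaps


# Made-up sample data for illustration only
gsc_rows = [
    {"query": "online mba", "position": 1.8},
    {"query": "mba tuition", "position": 14.2},
]
ads_rows = [
    {"search_term": "Online MBA", "cost": 120.0},
    {"search_term": "mba tuition", "cost": 45.0},
    {"search_term": "weekend mba near me", "cost": 30.0},
]

reduce_spend, content_gaps = classify_terms(gsc_rows, ads_rows)
print(reduce_spend)   # [('online mba', 120.0)]
print(content_gaps)   # [('weekend mba near me', 30.0)]
```

Exact string matching is deliberately strict. It will miss close variants that a language model would group together, which is why it works better as a sanity check on the headline counts than as a replacement for the analysis.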

-

American Express just launched an insanely tiny airport lounge with only 33 seats. Here’s why it says it’s worth squeezing in

Airport lounges are getting bigger, flashier, and increasingly crowded. American Express (Amex) believes the next evolution might actually be smaller.

On Wednesday, the company opened the doors to Sidecar by The Centurion Lounge, a new 33-seat speakeasy-style lounge concept at Harry Reid International Airport in Las Vegas. The space is designed specifically for travelers who have 90 minutes or less before boarding, offering a quick stop for food, drinks, and a moment of calm before heading to the gate.

The opening represents the first new format for the Centurion Lounge brand since the network debuted more than a decade ago.

According to Audrey Hendley, president, global travel and lifestyle services for American Express, the concept emerged after Amex studied how travelers actually use its lounges.

“When we looked at customers’ behavior of the lounges, there are a lot of customers who come on their own or with two people with shorter time available but they still want to experience a lounge,” Hendley tells Fast Company.

Sidecar was designed to meet that demand.

“We designed the lounge in a smaller space to really suit the needs of those types of customers,” Hendley said. “They want to come, they want an elevated experience, they want something to eat, they probably want something to drink and get themselves on their way.”

A lounge built for quick visits

Unlike traditional airport lounges with amenities like dedicated workspaces and showers designed for longer layovers, Sidecar is meant to function almost like a small restaurant inside the terminal.

Travelers can sit at the bar or at small tables and order restaurant-quality food and drinks through a QR code system. Orders are delivered directly by servers, creating a more restaurant-style experience than the buffet approach typical of many airport lounges.

“We are really trying to lean into restaurants and create a good experience for customers to maximize their time when they are in the space,” Hendley says.

The lounge also operates under tighter time rules than a traditional Centurion Lounge. Eligible cardholders can enter within 90 minutes of their flight departure, which encourages quicker visits and faster turnover. Hendley notes that if your flight ends up getting delayed while you’re inside, you won’t be kicked out when your 90 minutes are up.

The new concept sits near Gate D1 in Concourse D, a short walk from the airport’s main Centurion Lounge. The two lounges are designed to complement each other rather than compete.

“If you have a longer time, you are probably better off in the other lounge where there is more space,” Hendley says.

A chef-driven menu

Despite the smaller footprint, American Express is leaning heavily on the culinary reputation of the Centurion brand.

Sidecar’s rotating menu features dishes from The Culinary Collective by The Centurion Lounge, a group of chefs whose restaurants span the country and whose cuisines range from Afro Caribbean to Italian. The lineup includes Kwame Onwuachi, Michael Solomonov, Mashama Bailey, and Sarah Grueneberg.

Dishes on the opening menu include crushed cucumber salad with crispy rice pearls, avocado toast with schug labneh and black sesame seeds, and mushroom and mustard greens egg bites with black garlic aioli.

For Onwuachi, the challenge of designing dishes for an airport lounge versus a restaurant is less about the setting and more about scale.

“It’s definitely different, but we just focus on flavor and streamlining a little bit,” Onwuachi said.

He said his approach to creating dishes does not change much based on the venue.

“We think about good food and we got to figure out how to do it, no matter how big or small the space,” he says.

That often means adapting restaurant techniques to work at airport volume while maintaining flavor.

“We can’t put caviar and truffles and things like that. But we’re able to still inject flavor into it in many different ways,” Onwuachi said.

Recipes are developed in the chefs’ own kitchens before being adapted for the lounge environment.

“We create it in our kitchens, we document the recipes, and we send it over,” Onwuachi said. “At the end of the day, they still have a kitchen. It’s just a lot more volume.”

A curated wine program

Wine will also play a central role in the experience.

Sommelier Helen Johannesen, founder of Helen’s Wines and partner in Jon and Vinny’s restaurants, curated the wine list for Sidecar and will oversee wine programs across Centurion Lounges in the United States beginning in spring 2026.

Johannesen said she approached the project with the goal of making the wine program feel as intentional as the food.

“I created over 200 different wines nationwide for the launch,” she says. “The Centurion Lounge and Sidecar are such an elevated experience. The wine should match that and go with the food.”

Her philosophy was to ensure the wines enhance the overall lounge environment rather than simply fill out a menu.

“When you are sitting in a gorgeous space like this and drinking a beautiful glass of wine, you should not feel like someone barely thought about it,” Johannesen says. “You should feel like there is intention behind it.”

For the Las Vegas location, she curated a smaller, tightly edited list of seven wines designed to appeal to a wide range of travelers passing through the airport.

“I thought about how Las Vegas gets a traveler that is more national, so people are coming in and out of Vegas from all over the country,” she said. “I wanted varietals that felt exciting but also universal so there is something for everyone.”

That approach includes familiar grapes like Chardonnay and Sauvignon Blanc, but with distinctive producers and styles. At the same time, she said the wine list is designed to complement the food rather than compete with it.

Designed with Las Vegas in mind

The new lounge is intentionally compact, but American Express leaned into design to make the space feel immersive.

Sidecar’s interior draws on an oasis-in-the-desert concept that combines desert-inspired tones with touches of Las Vegas glamour. The space features natural stone surfaces, greenery, brass accents, antique mirrors, and warm lighting, along with subtle touches of American Express blue.

The goal is to create a speakeasy-inspired retreat inside the airport that contrasts with the bustle of the terminal.

Clark County aviation leadership says the concept also reflects how airports are thinking more strategically about space. According to James C. Chrisley, airports increasingly need to maximize limited terminal space while still delivering a premium traveler experience.

The airport lounge arms race

Airport lounges used to be a quiet perk for frequent flyers. In 2026, they have become one of the most competitive fronts in the credit card industry.

Premium card issuers are investing heavily in airport spaces as a way to win and retain affluent travelers. Lounges have evolved from simple waiting rooms with snacks into physical expressions of a card’s brand. Instead of marketing benefits like points multipliers or statement credits on paper, companies are building spaces where travelers can experience those perks before their trip even begins.

American Express has been one of the biggest players in that shift. The Centurion Lounge network, which now includes 32 locations worldwide, helped set the modern standard for credit card lounges with chef partnerships, premium cocktails, and design that feels more like a boutique hotel than an airport terminal. Sidecar represents the next iteration of that strategy.

At the same time, competitors are experimenting with their own interpretations of what a premium travel experience should look like.

Capital One has leaned heavily into chef-driven food and local partnerships in its lounges, and recently pushed the concept further by opening Capital One Landing at LaGuardia Airport in New York. The space, created with chef José Andrés, functions more like a full restaurant than a traditional lounge, with a large working kitchen and a tapas-style menu cooked from scratch.

Chase is also testing new formats within its Sapphire Lounge network, including location-specific concepts designed to reflect the cities they serve. Some spaces emphasize locally inspired menus or distinctive design elements that make the lounge feel like part of the destination rather than just a stop before boarding.

The common thread is that the competition has moved beyond simply offering lounge access. Now the question is what kind of experience travelers find when they walk inside.

“We learn from all of them,” said Audrey Hendley of the company’s growing network of lounges. “They all have different personalities and different customers who go through them.”

The broader goal, she said, is to continue expanding the role American Express plays in the travel journey.

“Customers want American Express to help them with their end-to-end travel experience,” Hendley said. “We want to continue to innovate in this space and lead in the lounge space as we have been doing for the last 13 years.”

-

Target’s turnaround plan isn’t built for this moment

Americans are feeling financially stretched: 92% cut back on spending last year, including curbing essentials like healthcare and groceries. Is this really the time for Target to be focused on trendy throw pillows, luxury beauty products, and premium sodas? At Target’s investor day on Tuesday, CEO Michael Fiddelke tried to convince Wall Street that the retailer is about to undergo a massive turnaround after years of declining comparable sales, including in the most recent quarter. His reinvention plan is anchored in stylish design, differentiation from other retailers, and delighting the customer in-store. But none of these strategies seems built for the current economic moment. The plan, as laid out by Fiddelke, chief merchandising officer Cara Sylvester, and CFO Jim Lee, involves $1 billion in new investment, 130-plus store remodels, 3,000 new items in the beauty aisle, and a deliberate push to reclaim Target’s identity as the cool, affordable alternative to boring big-box retail. It is, in many respects, a story Target has told before, and that’s the problem. “I’ve seen Target at our best, I’ve seen us when we’re not at our best,” Fiddelke said in response to an analyst who noted that many elements of the current plan looked remarkably similar to what Target attempted a decade ago. “The ingredients that have always fueled us at our best are when we’re design-led, when we’re winning with differentiation, and when our experience is top-notch.” But what worked for Target in the past is unlikely to work now. It’s not just that the retail landscape has evolved, with competitors like Walmart encroaching on Target’s territory with more stylish products. The U.S. is now in the midst of a full-blown affordability crisis, and consumers of all social classes are looking for cheaper options that will stretch their dollar. 
In a trade-down economy, Target’s focus on premium products and exciting in-store experiences doesn’t seem like what shoppers need right now. A Playbook Built for Another Era Target’s pitch to investors is rooted in a very specific vision of its customer: Someone who grabs a Starbucks cappuccino on the way in, wanders the aisles in search of something new, and feels good when the product is cheaper than what they saw at Nordstrom. “We want that smile to get bigger when you flip over the price tag and see the value that’s there,” Fiddelke said, describing the aspirational shopping experience that has long defined Target’s brand identity. The problem is that this customer—the one who shops for pleasure, who browses, who reaches for the new—is under enormous financial pressure right now. As U.S. involvement in the Iran conflict sends energy prices climbing and reignites fears of a fresh inflation wave, American consumers are cutting back broadly. According to the 2026 Cost-of-Living Crunch Report, only 12% of workers say their wages have kept pace with inflation, and just 17% feel financially secure enough to save money after spending on essentials. Many Americans are struggling to afford their essentials, with 65% saying these expenses cause stress, and 49% dipping into savings to buy what they need. The notion that these same consumers will resume discretionary spending through refreshed home and apparel aisles—however stylishly merchandised—may be wishful thinking. Yet Target’s turnaround plan leans heavily into exactly those categories. Fiddelke spoke enthusiastically about the profit potential of clothing and home goods, describing them as “high-margin categories” that, when they are “humming on the top line,” generate substantial profit. Sylvester offered the brand’s own-label story as evidence of the value equation at work: “Cat & Jack—phenomenal kids’ clothing brand. 
We design the leggings with reinforced knees, they’re $5, oh and by the way, you can return it. That is the value equation that we expect of all of our own brands.” (The line generates upwards of $3 billion a year for Target.) On the one hand, it makes sense for Target to spruce up its apparel lines. According to Coresight Research data, Target’s apparel sales fell almost 5% in 2025, while the rest of the market grew 4.8%. Target’s beauty sales were flat, while the total market grew 5%. “Target needs to act to stem its loss of market share,” says John Mercer, head of global research at Coresight Research. But on the other hand, fashion-forward clothing is a discretionary purchase, and families feeling the financial pinch may be less inclined to buy their kids a wardrobe full of trendy new outfits. They might opt, instead, to buy basics from budget retailers like TJ Maxx or to buy secondhand from ThredUp. The Trade-Down Economy Is Real, and Target Is Late to It While Target has spent years navigating several simultaneous crises, including a boycott after it reneged on its DEI policies and complaints about messy stores and long check-out lines, a different cohort of retailers has been quietly gaining ground. Walmart has posted consistent comparable sales growth by doubling down on everyday value, grocery, and online sales. Ulta Beauty, once written off as a niche player, has grown explosively by understanding that customers are trading down from luxury but still want occasional indulgences. The trade-down economy does not mean Americans stop spending. It means they spend more carefully and more deliberately. Value-focused retailers are winning because they are consistently focused on low prices and helping the customer stretch their dollar through promotions and discounts. Target’s proposition, by contrast, has grown murky. The company is trying to be many things simultaneously: a design destination, a grocery stop, a beauty authority, a tech-enabled convenience play. 
Fiddelke argues that this allows it to differentiate itself from other retailers, which presumably includes its biggest competitor, Walmart. But this seems misguided. Budget-focused retailers have been growing in recent quarters. It might make more sense for Target to take a page from their playbook. A Team of Veterans Fiddelke, who began his career at Target in 2003 as an intern, was named CEO last year. Throughout the call, he argued that his institutional knowledge is a strength. “I feel more aligned as a leadership team and as a company on what our unique path is to win than I’ve probably ever felt in my 23 years,” he said. Nostalgia is a powerful force inside a corporate culture. But a leadership team that has spent decades inside Target, and has absorbed its mythology, might be poorly positioned to reckon honestly with what it needs to become. When investors pressed on whether Fiddelke’s plan was truly different from past attempts, the answers kept circling back to the same touchstones: design, differentiation, delightful experience. These are real strengths. They built a genuinely beloved brand. But they are also a rearview mirror, a map of a landscape that has already changed. The centerpiece of Target’s investment plan is the physical store: hundreds of millions in added payroll, 130-plus full remodels, and expansion into new markets. “The delight when we bring a new Target to a new market,” Fiddelke said, invoking the emotional resonance that a store opening can generate in a community. Lee added that the “bulk” of the $1 billion investment would go toward guest-facing store improvements. The company plans to touch all 2,000 locations with new assortment: more newness, Fiddelke said, “than we’ve seen in any year in the last decade.” Analysts aren’t sure this is the right move. 
“As a general principle, we are wary of consumer staples retailers pouring money into store remodels when they are losing share to highly price-competitive retailers,” says Mercer of Coresight Research. “It’s an approach that generally hasn’t tended to work well in the past.” Across Target’s existing footprint, the biggest challenge is not aesthetics. It is whether the value proposition—the reason to drive to a Target rather than click to Amazon or swing through Walmart—is compelling enough at a moment when the consumer isn’t looking for indulgence. In the trade-down economy, delight is a luxury. And Target has not yet made the case that it understands this new reality. View the full article

-

5 Essential Tools for Customer Support Management

In today’s competitive environment, effective customer support management is vital for business success. To achieve this, you’ll need the right tools to streamline operations and improve customer interactions. By using help desk software, live chat systems, and knowledge bases, among others, you can enhance service delivery and boost satisfaction. Understanding these fundamental tools can shape your support strategy. So, what specific tools should you consider implementing for the best results? Key Takeaways Help Desk Software: Centralizes customer inquiries for efficient tracking and resolution, ensuring no issue goes unaddressed. Live Chat Tools: Facilitate real-time support, significantly increasing customer satisfaction and providing immediate assistance. Knowledge Base Software: Empowers customers to independently find answers, reducing support ticket volume and enhancing self-service options. CRM Tools: Enable personalized interactions, helping maintain strong customer relationships and improving overall service delivery. AI-Powered Tools: Automate repetitive tasks, enhance efficiency, and provide insights through advanced analytics and performance tracking. What Is Customer Support Management? Customer Support Management (CSM) is a strategic approach that focuses on overseeing customer interactions to improve satisfaction and cultivate loyalty. It involves various tools and processes designed to meet customer needs effectively. Key elements of customer support management include case management, which tracks inquiries, and omnichannel support, which ensures customers receive consistent experiences across different platforms. Automation plays an essential role by streamlining repetitive tasks, allowing support teams to focus on more complex issues. In addition, self-service tools empower customers, which matters because 61% prefer solving simple problems independently. 
Measuring success in CSM typically involves metrics like Customer Satisfaction (CSAT), First Contact Resolution (FCR), and Net Promoter Score (NPS). These metrics help you assess the overall customer experience and your team’s performance, which in turn guides efforts to improve satisfaction. Benefits of Customer Support Tools Customer support tools can greatly improve your support team’s efficiency and effectiveness. As a customer support manager, you’ll find that these tools streamline processes and allow your agents to focus on complex inquiries instead of repetitive tasks. Here are some key benefits of customer support tools: Faster resolution times: Organizations can resolve issues up to 30% faster with a ticketing system. Increased customer satisfaction: Live chat features can boost customer satisfaction rates by up to 73%. Reduced support ticket volume: Self-service options can cut support tickets by 61%, helping customers find answers independently. Performance tracking: Analytics integration enables tracking of metrics like CSAT and FCR, leading to informed decision-making. Types of Customer Support Tools Understanding the various types of tools available can greatly boost your team’s effectiveness. A robust customer service management system typically includes help desk software, such as Zendesk and Freshdesk. These tools centralize inquiries, allowing for efficient tracking and resolution of customer issues across multiple channels. Live chat tools, like Crisp and LiveAgent, facilitate real-time communication, improving customer satisfaction through immediate assistance. Knowledge base software, such as Help Scout and Zendesk Guide, empowers customers to find answers independently, markedly reducing support ticket volume. 
Furthermore, CRM tools enable personalized interactions, ensuring your team can maintain strong customer relationships. Finally, AI-powered tools from Nextiva and Intercom automate repetitive tasks, providing chatbots for instant help and using predictive analytics to boost overall efficiency. Top 5 Customer Support Tools Selecting the right customer support tools can greatly improve your team’s efficiency and effectiveness. Here are five top tools that can boost your customer support team’s performance: Zendesk: A highly customizable platform with advanced reporting and a unified view across channels, suitable for all business sizes. Help Scout: Offers a shared inbox and strong collaboration features, along with AI capabilities to draft email responses, increasing efficiency. Intercom: Focuses on premium AI support, providing extensive knowledge base features, user-friendly inboxes, and custom bot creation for personalized interactions. Zoho Desk: Stands out for its advanced AI features, including a virtual assistant that generates context-aware responses and analyzes customer emotions, improving support quality. These tools can help your customer support team streamline processes, improve communication, and deliver better service. Ultimately, this leads to satisfied customers and increased loyalty. How to Choose the Right Customer Support Tool Choosing the right customer support tool can greatly impact your team’s ability to provide effective service and maintain customer satisfaction. As a support manager, start by evaluating your team’s specific needs and workflows. Verify that the tool offers essential features like ticketing, live chat, and automation capabilities that align with your operations. Integration matters; check whether the tool can seamlessly connect with existing software, such as CRM systems, to improve efficiency. 
Examine scalability to accommodate business growth and evolving customer demands, especially if you anticipate increased ticket volumes. Review user feedback and case studies to understand how the tool has benefited similar businesses, focusing on metrics like customer satisfaction (CSAT) and first contact resolution (FCR). Finally, prioritize tools with robust reporting and analytics features, as these provide valuable insights into customer interactions and agent performance, helping you identify areas for improvement in your support processes. Frequently Asked Questions What Are the 7 R’s of Customer Service? The 7 R’s of customer service are essential for effective service delivery. They include: Right Product, ensuring customers get what they ordered; Right Place, for accurate delivery locations; Right Time, focusing on promptness; Right Price, maintaining competitive pricing; Right Quantity, providing the correct amount; Right Quality, ensuring products are in ideal condition; and Right Customer, targeting the appropriate audience. Understanding these principles helps you improve customer satisfaction and streamline service processes. What Are the 7 Cs of Customer Service? The 7 Cs of customer service are Clarity, Consistency, Capability, Commitment, Courtesy, Creativity, and Communication. Clarity means delivering straightforward information, while Consistency ensures you provide reliable service across all interactions. Capability highlights your team’s knowledge and skills in addressing customer needs. Commitment reflects your dedication to customer satisfaction, and Courtesy involves treating customers with respect. Creativity allows you to solve problems innovatively, and Communication ensures effective information exchange with customers. What Are CCM Tools? 
CCM tools, or Customer Communication Management tools, help you deliver personalized and consistent communications to your customers across various channels like email, SMS, and social media. They automate communication processes, track interactions, and manage customer preferences, enhancing engagement. By supporting omnichannel strategies, these tools ensure a cohesive experience for your customers. Integrating with CRM systems allows for targeted messaging, ultimately leading to improved customer loyalty and retention through consistent experiences. What Are the 7 Essentials to Excellent Customer Service? To provide excellent customer service, focus on seven fundamentals: active listening to understand needs, consistency across communication channels, and personalization that acknowledges customer history. Speed and efficiency are critical for timely responses, while empowering customers with self-service options boosts satisfaction. Furthermore, a knowledgeable team that can resolve issues quickly contributes greatly. Finally, gathering feedback helps improve services continuously, ensuring you meet evolving customer expectations effectively. Each element plays a significant role in achieving customer satisfaction. Conclusion In summary, effective customer support management relies on crucial tools that streamline operations and improve service delivery. By integrating help desk software, live chat tools, knowledge base software, CRM tools, and AI-powered solutions, you can create a more efficient support system. Each tool plays a key role in addressing customer needs, reducing response times, and nurturing positive relationships. When selecting the right tools, consider your specific requirements to ensure they align with your organization’s goals and boost overall customer satisfaction. Image via Google Gemini This article, "5 Essential Tools for Customer Support Management" was first published on Small Business Trends View the full article
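As a rough illustration of the three metrics the customer support article names (CSAT, FCR, and NPS), here is a minimal Python sketch. The article does not give formulas, so the definitions below follow common industry conventions and should be read as assumptions: CSAT as the share of survey responses rated satisfied, FCR as the share of tickets resolved on first contact, and NPS as the percentage of promoters (scores of 9 or 10) minus the percentage of detractors (scores of 0 to 6). All function and parameter names are hypothetical.

```python
def _percentage(part: int, total: int) -> float:
    """Return part/total as a percentage, rejecting an empty sample."""
    if total <= 0:
        raise ValueError("total must be positive")
    return 100.0 * part / total


def csat(satisfied_responses: int, total_responses: int) -> float:
    """CSAT (assumed definition): share of survey responses rated satisfied."""
    return _percentage(satisfied_responses, total_responses)


def fcr(resolved_on_first_contact: int, total_tickets: int) -> float:
    """FCR (assumed definition): share of tickets resolved on first contact."""
    return _percentage(resolved_on_first_contact, total_tickets)


def nps(scores: list[int]) -> float:
    """NPS (assumed definition): % promoters (9-10) minus % detractors (0-6)."""
    if not scores:
        raise ValueError("scores must not be empty")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100.0 * (promoters - detractors) / len(scores)
```

With these definitions, `csat(45, 60)` and `fcr(30, 40)` both give 75.0, and `nps([10, 9, 8, 7, 6, 3, 10, 9, 5, 10])` gives 20.0 (five promoters, three detractors, ten responses). Individual help desk products may define or weight these metrics differently.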

-

Update Your Android ASAP to Patch These 129 Security Flaws

Google has released its Android Security Bulletin for March with patches for 129 vulnerabilities, one of which is a zero-day flaw in a Qualcomm display component that may be under "targeted, limited exploitation." The latest update also fixes 10 critical severity bugs across Android components. CVE-2026-0006 is a remote code execution vulnerability in the System component that attackers could exploit with no additional privileges or user interaction. CVE-2025-48631 is a denial-of-service flaw in System, while CVE-2026-0047 is an escalation of privilege vulnerability in Framework. There are seven critical escalation of privilege flaws being patched in Kernel components. Google is also addressing issues in Qualcomm, MediaTek, Arm, Misc OEM, Unisoc, and Imagination Technologies components, which may not affect all Android devices. One zero-day patched The zero-day patched with this security update is an integer overflow or wraparound in a Qualcomm Graphics subcomponent that allows local attackers to trigger memory corruption. The vulnerability, labeled CVE-2026-21385, affects 235 Qualcomm chipsets. According to Qualcomm's own security advisory, the vulnerability was reported on Dec. 18, 2025 through the Google Android Security team, with customers notified on Feb. 2, 2026. Update your Android ASAP Android users should install the latest security patch as soon as it becomes available; you should get a notification prompting you to do so. Google pushes updates for its own Pixel devices and the core Android Open Source Project (AOSP) code, while other manufacturers release patches for their respective devices around the same time. If you have a Samsung, Motorola, or Nokia, for example, you may experience a slight delay. There are two patch levels labeled as 2026-03-01 and 2026-03-05, the latter of which fixes all issues included in the former. This month's patches apply to AOSP versions 14, 15, 16, and 16-qpr2. 
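To make the relationship between the two patch levels concrete, here is a small Python sketch that classifies a device's reported security patch level against the March labels. It assumes the level is an ISO-style date string (as in 2026-03-01 and 2026-03-05 above) and that a later date includes everything from an earlier one, which is how the bulletin describes these two levels. The function and constant names are hypothetical.

```python
from datetime import date

# Patch level labels taken from the March bulletin described above.
PARTIAL_MARCH_LEVEL = "2026-03-01"
FULL_MARCH_LEVEL = "2026-03-05"


def covers(device_level: str, required_level: str) -> bool:
    """True if the device's patch level is at or beyond the required level.

    Assumes both values are ISO date strings (YYYY-MM-DD).
    """
    return date.fromisoformat(device_level) >= date.fromisoformat(required_level)


def describe(device_level: str) -> str:
    """Classify a device's patch level relative to the March bulletin."""
    if covers(device_level, FULL_MARCH_LEVEL):
        return "all March fixes"
    if covers(device_level, PARTIAL_MARCH_LEVEL):
        return "partial March fixes"
    return "needs update"
```

For example, `describe("2026-02-05")` returns "needs update", while `describe("2026-03-05")` returns "all March fixes". Whether a given fix applies to a particular device still depends on its chipset and manufacturer, which this sketch does not model.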
You can check for available updates via Settings > Security & privacy > System & updates > Security update.
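If you want to reason about the two March patch levels programmatically, here is a minimal Python sketch. It assumes patch levels are ISO-style date strings and that a later date includes every fix from an earlier one, as the bulletin describes for 2026-03-01 and 2026-03-05. The function names and status labels are hypothetical, chosen only for illustration. On a device connected over adb, the current level can usually be read from the `ro.build.version.security_patch` property.

```python
from datetime import date

# Patch levels named in the March bulletin.
MARCH_PARTIAL = "2026-03-01"
MARCH_FULL = "2026-03-05"


def parse_patch_level(level: str) -> date:
    """Parse a patch level string such as '2026-03-05' into a date."""
    return date.fromisoformat(level)


def covers(device_level: str, bulletin_level: str) -> bool:
    """Return True if the device's patch level is at or past the bulletin level."""
    return parse_patch_level(device_level) >= parse_patch_level(bulletin_level)


def march_status(device_level: str) -> str:
    """Classify a device against the two March patch levels (illustrative labels)."""
    if covers(device_level, MARCH_FULL):
        return "fully patched"
    if covers(device_level, MARCH_PARTIAL):
        return "partially patched"
    return "not patched"


if __name__ == "__main__":
    for level in ("2026-02-05", "2026-03-01", "2026-03-05"):
        print(level, "->", march_status(level))
```

A device reporting 2026-03-01 has only the first set of fixes, so it is worth checking again once the 2026-03-05 level reaches your model.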

-

Bitcoin, XRP, and other crypto prices are rising today. What Trump said on Truth Social to boost digital assets

Bitcoin and other cryptocurrencies have had a rough 2026 so far. BTC itself is currently down more than 18% year-to-date, and other major tokens have performed even worse, such as XRP, which has lost more than 23% of its value since the year began, and Ethereum, which has declined 30%. But today, it seems like their fortunes may be reversing. Bitcoin is currently up nearly 5%, and XRP and Ethereum are up over 2% and 3%, respectively. One of the contributing factors to this turnaround may be the comments made by the president on his Truth Social network last night. Here’s what he said and what you need to know. What’s happened? Yesterday evening, the president took a break from focusing on Iran to briefly return to a subject closer to home: cryptocurrency legislation. In a post on Tuesday evening, the president railed against America’s big banks, alleging that they were trying to undermine the GENIUS Act, which passed into law last year, and warned that they should not try to hold the not-yet-passed Clarity Act hostage. Both acts are designed to bring regulatory certainty to the cryptocurrency market, which could boost investor confidence in the digital tokens as legitimate and relatively safe assets. The GENIUS Act legislated that stablecoin issuers had to hold $1 in assets for every stablecoin they issued; however, the act forbade stablecoin issuers from offering owners of stablecoins a yield on them, which is essentially interest on their stablecoin holdings. The Clarity Act, which has not yet been passed into law, will specify which regulator oversees the broader crypto industry: the Commodity Futures Trading Commission (CFTC) or the Securities and Exchange Commission (SEC). The CFTC is generally seen as the more crypto-friendly agency, which could greatly benefit those in the crypto industry. In addition, the Clarity Act will legislate whether third parties can offer a yield to owners on their stablecoins.
In other words, the Clarity Act may get around the GENIUS Act ban on issuer-offered stablecoin yields by allowing third parties, such as exchanges like Coinbase, to offer yields. And this possibility of a yield allowance has banks worried. Why are banks worried about stablecoin yields? In short, because a yield on stablecoins could be a direct threat to one of the big banks’ biggest income sources: your savings. Currently, when you plop your money into a traditional savings account, the bank holds only a small portion of it. They lend the rest out to businesses or individuals who pay them relatively high interest on the loans. But the banks don’t pass most of this interest on your loaned savings to you. While the bank may receive 5% to 7% interest payments on your loaned savings, they generally give you only about 0.5% to 2% in interest on your savings. The banks keep the rest of the profits. But if third-party exchanges can offer yields, or rewards, on a person’s stablecoin holdings, and those yields are significantly higher (say, in the 4% to 5% range), the banks worry that many individuals will pull their savings from the banks’ low-interest savings accounts and put them into stablecoin accounts. If this happens, banks lose your money to lend, and they also lose the interest profit from your loaned savings. It’s because of this that the banking industry is reportedly fighting aspects of the Clarity Act behind closed doors, and as CoinDesk notes, that is one of the reasons the act is currently being held up. What did the president say? In a post on Truth Social yesterday evening, the president attacked America’s banks, accusing them of undermining the GENIUS Act and his administration’s “powerful Crypto Agenda.” “Americans should earn more money on their money,” the president wrote.
“The Banks are hitting record profits, and we are not going to allow them to undermine our powerful Crypto Agenda that will end up going to China, and other Countries if we don’t get The Clarity Act taken care of.” “The Banks should not be trying to undercut The Genius Act, or hold The Clarity Act hostage,” the president continued. “They need to make a good deal with the Crypto Industry because that’s what’s in best interest of the American People. This Industry cannot be taken from the People of America when it is so close to becoming truly successful.” In the hours after the president’s post, major cryptocurrencies started to rise. Why is crypto rising today? The president’s posts have the power to move markets, and it seems that his rant last night against the banks, and in support of the crypto industry, is contributing to at least some of the rise we are seeing in crypto markets this morning. While the banks have a particular issue with the Clarity Act’s stablecoin yield ambitions, the act has many parts, and its passage should generally be a net positive for the broader crypto industry, whether that’s stablecoins or traditional cryptocurrencies like Bitcoin and XRP. The more the president keeps talking about the act, the more likely its passage may appear. As of the time of this writing, most major cryptocurrencies are seeing significant gains over the past 24 hours, including Bitcoin (up 4.4% to $70,896), Ethereum (up 3.1% to $2,050), BNB (up 3.3% to $650), and XRP (up 2.3% to $1.39). Of course, the president’s post is likely not the only factor behind crypto’s rise today. The digital tokens have taken a beating in recent weeks, so it’s possible that another contributing factor to their upward swing is investors “buying the dip.” That dip was exacerbated by asset selloffs primarily related to geopolitical uncertainties since the year began, including America’s attack on Venezuela, the president’s threat to take Greenland, and now, America’s conflict with Iran.
In any case, crypto investors will be happy to see digital tokens trending upward this morning. Whether they stay up in the days and weeks ahead, on the other hand, is unknowable.
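To make the banks' concern about stablecoin yields concrete, here is a rough worked example in Python. The $10,000 balance is hypothetical, and the rates are assumptions picked from inside the ranges quoted in the article (6% for what the bank earns, 1% for what the saver receives, 4.5% for a stablecoin yield). The sketch also assumes the whole balance is lent out and ignores fees, reserves, and risk, so treat it as an illustration rather than a model of any real account.

```python
def annual_interest(balance: float, rate_pct: float) -> float:
    """Simple one-year interest on a balance at a given percentage rate."""
    return balance * rate_pct / 100


# Hypothetical inputs chosen from the ranges quoted in the article.
balance = 10_000.0
bank_loan_rate = 6.0    # bank may receive 5% to 7% on loaned savings
savings_rate = 1.0      # saver generally gets about 0.5% to 2%
stablecoin_rate = 4.5   # possible third-party yield in the 4% to 5% range

bank_earns = annual_interest(balance, bank_loan_rate)
saver_gets = annual_interest(balance, savings_rate)
bank_keeps = bank_earns - saver_gets
stablecoin_pays = annual_interest(balance, stablecoin_rate)

if __name__ == "__main__":
    print(f"Bank earns on the loan:  ${bank_earns:,.2f}")
    print(f"Saver receives:          ${saver_gets:,.2f}")
    print(f"Bank keeps:              ${bank_keeps:,.2f}")
    print(f"Stablecoin yield pays:   ${stablecoin_pays:,.2f}")
```

Under these assumed numbers, the saver would receive several times more from the stablecoin yield than from the savings account, which is the gap the banks are reportedly worried about.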

-

Partner of Labour MP among three arrested in UK on suspicion of spying for China

London’s Metropolitan Police said officers had searched addresses in London, Wales and East Kilbride.

-

These Are the Best Kinds of Exercise for Losing Weight